2.2. Wrap-up on optical data fusion methods

(Approx. 30 min reading incl. references)

The main objective of data fusion is to generate synthetic observations of high spatiotemporal resolution that densify the annual satellite time series. Over the past three decades, various fusion techniques have been developed to combine data from multiple sources and improve the accuracy of crop phenology monitoring. These techniques can be broadly classified into traditional statistical approaches and data-driven approaches based on Machine Learning (ML) and Deep Learning (DL).

Traditional statistical approaches, such as the Spatial and Temporal Adaptive Reflectance Fusion Model (STARFM) and its extensions (e.g., STAARCH, ESTARFM), use weighted linear combinations of fine- and coarse-resolution images to predict missing observations (Gao et al. 2006; Gao et al. 2015; Hilker et al. 2009; Li et al. 2015). These models are effective in homogeneous landscapes but struggle in dynamic agricultural regions with abrupt land cover changes (Sisheber et al. 2022). The Flexible Spatiotemporal Data Fusion (FSDAF) model addresses these limitations (Zhu et al. 2016), but at a higher computational cost (Liao et al. 2017).
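
The weighted linear combination behind STARFM-type models can be illustrated with a strongly simplified sketch. It assumes one fine/coarse image pair at date t1 and one coarse image at the prediction date t2, all already resampled to the same grid. The function name `starfm_like_predict`, its parameters and the simple inverse-product weighting are illustrative assumptions; the published algorithm additionally selects spectrally similar neighbours and handles multiple input pairs, which is omitted here.

```python
import numpy as np


def starfm_like_predict(fine_t1, coarse_t1, coarse_t2, radius=1, eps=1e-6):
    """Predict a fine-resolution image at t2 (simplified, STARFM-inspired).

    All inputs are 2-D arrays on the same grid. Each neighbour in a moving
    window contributes the candidate value fine_t1 + (coarse_t2 - coarse_t1),
    weighted by its spectral difference, temporal difference and distance.
    """
    fine_t1 = np.asarray(fine_t1, dtype=float)
    coarse_t1 = np.asarray(coarse_t1, dtype=float)
    coarse_t2 = np.asarray(coarse_t2, dtype=float)
    rows, cols = fine_t1.shape

    # Per-pixel ingredients of the weights and the candidate prediction.
    spectral = np.abs(fine_t1 - coarse_t1)     # fine vs. coarse at t1
    temporal = np.abs(coarse_t2 - coarse_t1)   # coarse change t1 -> t2
    candidate = fine_t1 + (coarse_t2 - coarse_t1)

    # Reflect-pad so that border pixels also have a full window.
    spectral_p = np.pad(spectral, radius, mode="reflect")
    temporal_p = np.pad(temporal, radius, mode="reflect")
    candidate_p = np.pad(candidate, radius, mode="reflect")

    weighted_sum = np.zeros((rows, cols))
    weight_total = np.zeros((rows, cols))
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            row_slice = slice(radius + dy, radius + dy + rows)
            col_slice = slice(radius + dx, radius + dx + cols)
            # Relative distance term: 1 at the centre, larger further away.
            distance = 1.0 + np.hypot(dy, dx) / radius
            # Small differences and short distances give large weights.
            weight = 1.0 / (
                (spectral_p[row_slice, col_slice] + eps)
                * (temporal_p[row_slice, col_slice] + eps)
                * distance
            )
            weighted_sum += weight * candidate_p[row_slice, col_slice]
            weight_total += weight

    # Normalised weighted combination of the neighbouring candidates.
    return weighted_sum / weight_total
```

In a homogeneous scene where the coarse signal changes uniformly between t1 and t2, every candidate carries the same change and the prediction simply shifts the fine image by that amount. Where land cover changes abruptly inside a coarse pixel, this assumption no longer holds, which is the weakness noted above.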

With the rise of data-driven approaches, ML-based fusion methods (e.g., Random Forest, Support Vector Regression) have gained popularity in phenological studies (Chen and Zhang 2023; Cui et al. 2023; Raza et al. 2024). More recently, DL-based fusion methods (e.g., Convolutional and Recurrent Neural Networks, Generative Adversarial Networks) have shown superior performance in generating high-quality fused images (Amankulova et al. 2024; Cai et al. 2024; Chen et al. 2021; Luo et al. 2023; Pang et al. 2025). These models have the potential to capture long-term temporal dependencies and abrupt changes, which makes them particularly suited to crop phenology monitoring. However, they require extensive computational resources and large labelled datasets, which limits their operational use (Zhang et al. 2023).

Traditional and ML-based spatiotemporal fusion models focus on the relationships within one or more image pairs. Recent approaches integrate additional temporal information (prior/posterior observations) as auxiliary input variables, which improves the prediction of synthetic images and the robustness of time series analysis (Huang et al. 2024; Song et al. 2022; Tan et al. 2022; Xiong et al. 2022).

The final choice of fusion technique depends on application-specific constraints, including data availability, computational efficiency, and the accuracy required for phenology monitoring.

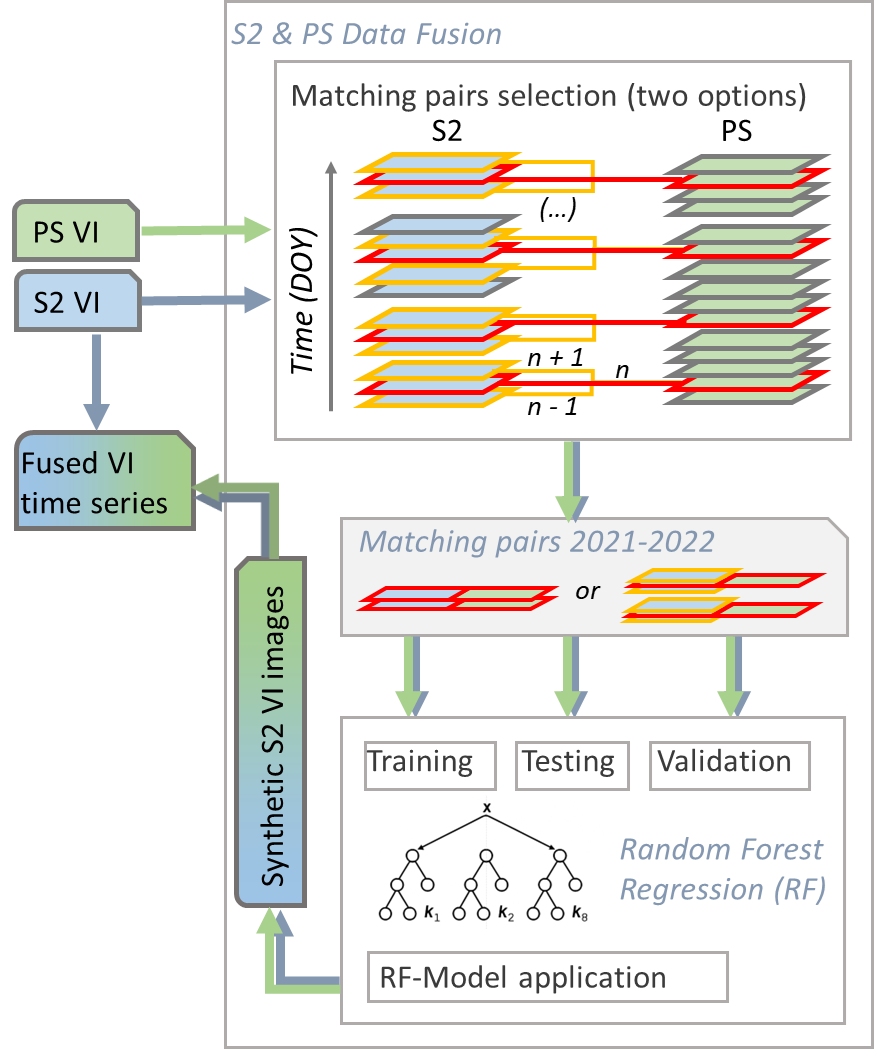

Denser time series have the potential to capture seasonal phenology in more detail. The fusion task demonstrated in this lecture uses the PS data from 2021 and 2022 to densify the annual S2 time series at 10 m spatial resolution. We examined three methods of S2 and PS data fusion: nearest-neighbour resampling, linear regression (LR) and random forest (RF) regression (Breiman 2001). The latter is widely used in the remote sensing community, mainly because it handles non-linearity, performs well and is computationally simple. In addition to the conventional fusion approach based on S2-PS image pairs with exact or near-exact acquisition dates (±1 day), an extended RF regression model was tested, in which the temporal information of the available S2 data was included as an additional input variable (Figure 3). This experimental approach uses one prior and one posterior S2 observation (relative to the S2-PS match) as auxiliary input variables to the RF model, providing a more detailed representation of the seasonal VI dynamics in the data.
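
The difference between the conventional and the extended RF model can be sketched as follows. This is a minimal illustration on synthetic per-pixel VI values, not the lecture's actual workflow: the helper names `build_features` and `fit_rf`, the random test data and the hyperparameters are all assumptions, and the PS values are assumed to be already resampled to the 10 m S2 grid. It uses `RandomForestRegressor` from scikit-learn.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor


def build_features(ps_vi, s2_prior=None, s2_posterior=None):
    """Stack per-pixel predictors as columns of a feature matrix.

    With only ps_vi, this is the conventional pair-based setup. Adding the
    prior and posterior S2 observations gives the extended setup.
    """
    columns = [np.ravel(ps_vi)]
    if s2_prior is not None and s2_posterior is not None:
        columns.append(np.ravel(s2_prior))
        columns.append(np.ravel(s2_posterior))
    return np.column_stack(columns)


def fit_rf(features, s2_target, n_estimators=50, seed=0):
    """Fit an RF regressor that maps the predictors to the S2 VI."""
    model = RandomForestRegressor(n_estimators=n_estimators, random_state=seed)
    model.fit(features, np.ravel(s2_target))
    return model


# Synthetic stand-in data (purely illustrative, not real S2/PS values).
rng = np.random.default_rng(42)
n_pixels = 500
ps_vi = rng.uniform(0.1, 0.9, n_pixels)                      # PS VI at match date
s2_prior = np.clip(ps_vi - 0.05 + rng.normal(0, 0.02, n_pixels), 0, 1)
s2_posterior = np.clip(ps_vi + 0.05 + rng.normal(0, 0.02, n_pixels), 0, 1)
s2_target = np.clip(0.9 * ps_vi + 0.05 + rng.normal(0, 0.02, n_pixels), 0, 1)

# Conventional model: S2 VI predicted from the matched PS VI only.
x_base = build_features(ps_vi)
rf_base = fit_rf(x_base, s2_target)

# Extended model: PS VI plus one prior and one posterior S2 observation.
x_ext = build_features(ps_vi, s2_prior, s2_posterior)
rf_ext = fit_rf(x_ext, s2_target)

# In practice the fitted model would be applied to PS acquisitions on dates
# without S2 coverage; here it is applied to the training pixels for brevity.
pred_base = rf_base.predict(x_base)
pred_ext = rf_ext.predict(x_ext)
print(pred_base[:3], pred_ext[:3])
```

The only structural change between the two setups is the width of the feature matrix (one column versus three), so the same training and prediction code serves both. A fair comparison would evaluate each model on held-out image pairs rather than on the training pixels.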

References

- Amankulova, K., et al. (2024) A Novel Fusion Method for Soybean Yield Prediction Using Sentinel-2 and PlanetScope Imagery. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing. 17: p. 13694-13707.

- Breiman, L. (2001) Random forests. Machine Learning. 45(1): p. 5-32.

- Cai, Y.C., et al. (2024) A deep learning approach for deriving wheat phenology from near surface RGB image series using spatiotemporal fusion. Plant Methods. 20(1).

- Chen, B., J. Li, and Y.F. Jin (2021) Deep Learning for Feature-Level Data Fusion: Higher Resolution Reconstruction of Historical Landsat Archive. Remote Sensing. 13(2).

- Chen, J. and Z. Zhang (2023) An improved fusion of Landsat-7/8, Sentinel-2, and Sentinel-1 data for monitoring alfalfa: Implications for crop remote sensing. International Journal of Applied Earth Observation and Geoinformation. 124: p. 103533.

- Cui, R.H., et al. (2023) Crop Classification and Growth Monitoring in Coal Mining Subsidence Water Areas Based on Sentinel Satellite. Remote Sensing. 15(21).

- Gao, F., J.G. Masek, M. Schwaller, and F. Hall (2006) On the blending of the Landsat and MODIS surface reflectance: predicting daily Landsat surface reflectance. IEEE Transactions on Geoscience and Remote Sensing. 44: p. 2207-2218.

- Gao, F., et al. (2015) Fusing Landsat and MODIS Data for Vegetation Monitoring. IEEE Geoscience and Remote Sensing Magazine. 3(3): p. 47-60.

- Hilker, T., et al. (2009) A new data fusion model for high spatial- and temporal-resolution mapping of forest disturbance based on Landsat and MODIS. Remote Sensing of Environment. 113(8): p. 1613-1627.

- Huang, H., et al. (2024) STFDiff: Remote sensing image spatiotemporal fusion with diffusion models. Information Fusion. 111.

- Li, Q.T., et al. (2015) Object-Based Crop Classification with Landsat-MODIS Enhanced Time-Series Data. Remote Sensing. 7(12): p. 16091-16107.

- Liao, C.H., et al. (2017) A Spatio-Temporal Data Fusion Model for Generating NDVI Time Series in Heterogeneous Regions. Remote Sensing. 9(11).

- Luo, D., et al. (2023) Utility of daily 3 m Planet Fusion Surface Reflectance data for tillage practice mapping with deep learning. Science of Remote Sensing. 7: p. 100085.

- Pang, M.Q., et al. (2025) Sentinel-1/2 Image Fusion Coupled With CSR, GAN, and Temporal Phenology Feature Construction for Cropland Mapping. IEEE Transactions on Geoscience and Remote Sensing. 63.

- Raza, D., et al. (2024) Improved method for cropland extraction of seasonal crops from multisensor satellite data. International Journal of Remote Sensing. 45(18): p. 6249-6284.

- Sisheber, B., et al. (2022) Tracking crop phenology in a highly dynamic landscape with knowledge-based Landsat-MODIS data fusion. International Journal of Applied Earth Observation and Geoinformation. 106.

- Song, Y.Y., et al. (2022) Remote Sensing Image Spatiotemporal Fusion via a Generative Adversarial Network With One Prior Image Pair. IEEE Transactions on Geoscience and Remote Sensing. 60.

- Tan, Z.Y., et al. (2022) A Flexible Reference-Insensitive Spatiotemporal Fusion Model for Remote Sensing Images Using Conditional Generative Adversarial Network. IEEE Transactions on Geoscience and Remote Sensing. 60.

- Xiong, S.P., et al. (2022) Fusing Landsat-7, Landsat-8 and Sentinel-2 surface reflectance to generate dense time series images with 10m spatial resolution. International Journal of Remote Sensing. 43(5): p. 1630-1654.

- Zhang, K., et al. (2023) Panchromatic and multispectral image fusion for remote sensing and earth observation: Concepts, taxonomy, literature review, evaluation methodologies and challenges ahead. Information Fusion. 93: p. 227-242.

- Zhu, X.L., et al. (2016) A flexible spatiotemporal method for fusing satellite images with different resolutions. Remote Sensing of Environment. 172: p. 165-177.