2.2. Machine learning as a generic framework for spatial prediction

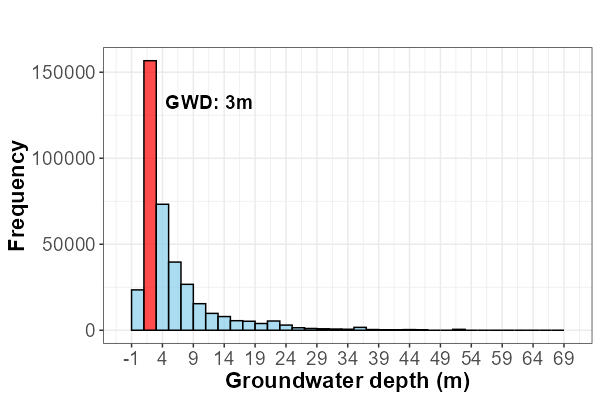

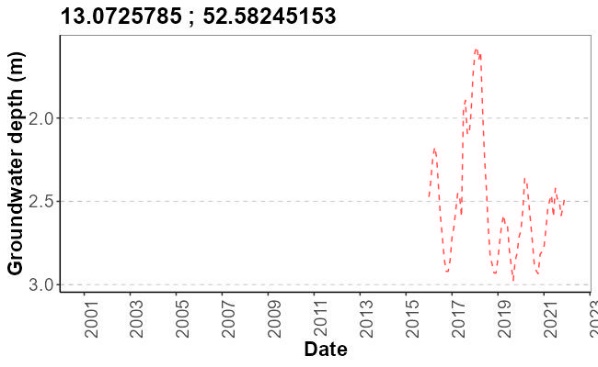

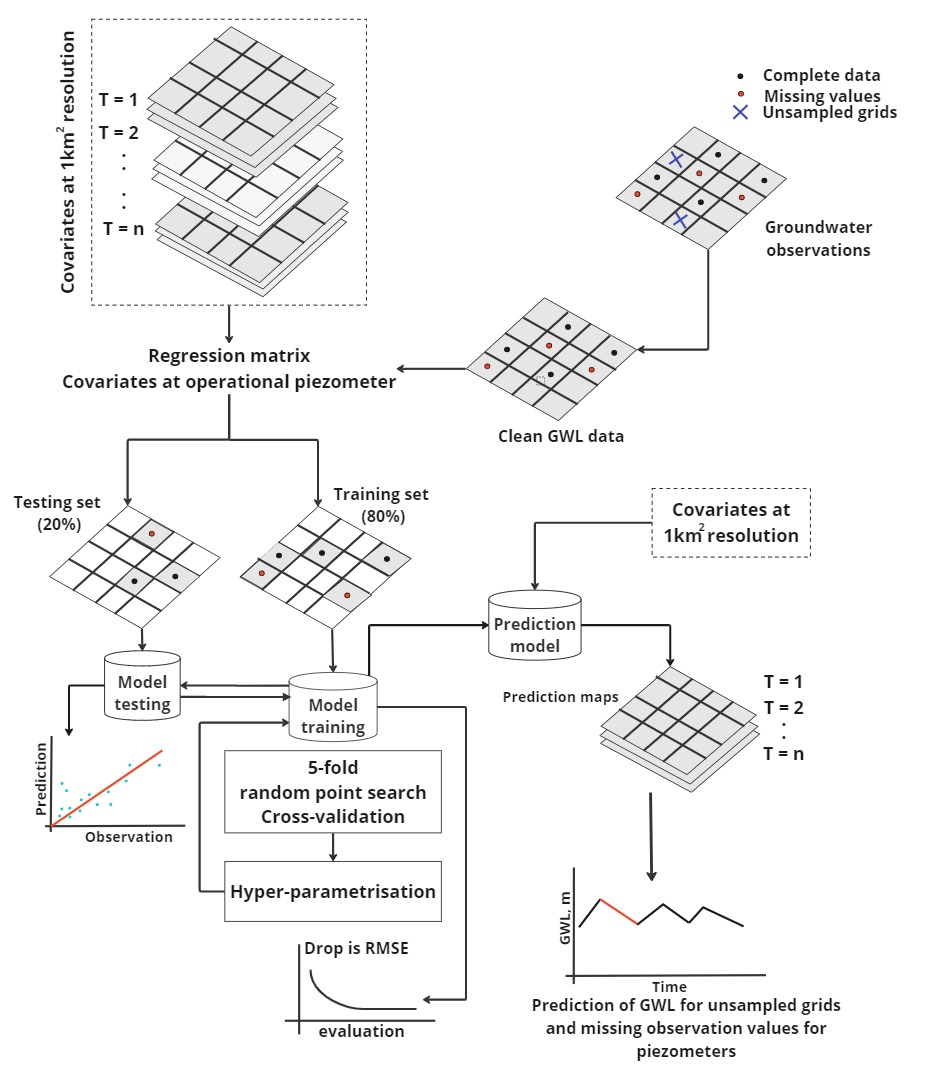

Despite major advances in spatial data acquisition technologies, machine learning (ML) still faces fundamental challenges in obtaining representative and optimized training samples. The current shift from model-centric to data-centric ML emphasizes that model performance depends heavily on how well training and test samples represent the underlying spatial or spatiotemporal phenomenon. In spatial datasets, dependence between nearby samples often violates the assumption of independent and identically distributed (i.i.d.) data. Oversampling spatially close points can lead to redundancy and artificially inflated accuracy, while intra-class imbalance, where samples within the same class are unevenly distributed across space, biases the model’s predictions (Figs. 2 and 3). Similarly, inter-class imbalance reduces classification performance for under-represented classes. Careful spatial sampling design is therefore crucial to ensure that the data reflect both spatial variability and class balance. Recent studies suggest that reinforcement learning can be used to guide adaptive spatial sampling, improving efficiency and reducing reliance on uniformly dense, computationally expensive data collection.

These sampling challenges directly influence the performance and reliability of commonly used spatial ML models such as Random Forest (RF), Random Forest for spatial data (RFsp), and Random Forest for spatial interpolation (RFSI). The standard RF model assumes independence among samples and may overfit when training data contain spatial redundancy or class imbalance. RFsp and RFSI extend the traditional RF framework by explicitly incorporating spatial coordinates, distance relationships, or spatial autocorrelation structures into the model, making them more robust to spatial dependence and improving generalization across heterogeneous landscapes (Fig. 4). However, even these spatially aware models depend on the quality and spatial representativeness of the training samples: poorly distributed or imbalanced samples can still bias predictions. Therefore, integrating optimized spatial sampling strategies, potentially guided by reinforcement learning, with models such as RFsp and RFSI offers a promising pathway to enhance predictive accuracy and spatial interpretability in geospatial applications. The following is a brief overview of each model:

Random Forest

Random Forest (Breiman, 2001) is an ensemble learning method that aggregates many decision trees to capture relationships between predictor variables and the target at sampled locations, and then generalizes to unsampled areas (Auret and Aldrich, 2012). Each tree is grown on a bootstrap sample (sampling with replacement) of the training data; observations not selected (out-of-bag, OOB) are used for internal cross-validation. The ensemble prediction at location x is the average of the individual tree predictions:

\[ \hat{Z}(x) = \frac{1}{B} \sum_{b=1}^B t_b^*(x)\]

where \(\hat{Z}(x)\) is the prediction at \(x\), \(B\) is the number of trees, \(b\) indexes the bootstrap replicates, and \(t_b^*\) is the tree grown on the \(b\)-th bootstrap sample. Analyses were carried out with the randomForest implementation in R 4.2.3 (Wright and Ziegler, 2017).
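The bootstrap, out-of-bag, and averaging steps can be illustrated with a minimal Python sketch. It is not the R implementation used in the analyses: for brevity, each tree is replaced by a depth-one regression stump on a single predictor, and the random selection of predictors at each split that a full Random Forest performs is omitted. The names `fit_stump`, `bagged_stumps`, `predict`, and `oob_mse` and the toy data are hypothetical.

```python
import random
from statistics import mean

def fit_stump(xs, ys):
    """Fit a depth-one regression tree (stump) on a single predictor."""
    best = None
    # Candidate thresholds: every distinct value except the largest,
    # so that both sides of the split are non-empty.
    for t in sorted(set(xs))[:-1]:
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        ml, mr = mean(left), mean(right)
        sse = sum((y - ml) ** 2 for y in left) + sum((y - mr) ** 2 for y in right)
        if best is None or sse < best[0]:
            best = (sse, t, ml, mr)
    if best is None:  # all predictor values identical: predict the mean
        m = mean(ys)
        return lambda x: m
    _, t, ml, mr = best
    return lambda x: ml if x <= t else mr

def bagged_stumps(xs, ys, n_trees=50, seed=0):
    """Grow n_trees stumps, each on a bootstrap sample drawn with replacement."""
    rng = random.Random(seed)
    n = len(xs)
    trees, oob_sets = [], []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]
        trees.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
        oob_sets.append(set(range(n)) - set(idx))  # observations not drawn
    return trees, oob_sets

def predict(trees, x):
    """Ensemble prediction: the average of the individual tree predictions."""
    return sum(t(x) for t in trees) / len(trees)

def oob_mse(trees, oob_sets, xs, ys):
    """Mean squared error using, for each point, only trees that never saw it."""
    errs = []
    for i, (x, y) in enumerate(zip(xs, ys)):
        preds = [t(x) for t, oob in zip(trees, oob_sets) if i in oob]
        if preds:
            errs.append((mean(preds) - y) ** 2)
    return mean(errs)

# Toy data (illustrative only): a step function along one predictor.
xs = [float(i) for i in range(20)]
ys = [0.0 if x < 10 else 1.0 for x in xs]
trees, oob_sets = bagged_stumps(xs, ys)
print(predict(trees, 2.0), predict(trees, 17.0), oob_mse(trees, oob_sets, xs, ys))
```

Because each observation is left out of roughly a third of the bootstrap samples, the out-of-bag error gives an internal check on predictive performance without a separate hold-out set.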

Random Forest for Spatial Data

Random Forest for Spatial Data (RFsp; Hengl et al., 2018) extends standard RF to account for spatial dependence. Conventional RF ignores sampling locations and spatial structure, which can degrade performance when the response shows strong spatial autocorrelation or when point patterns are biased (Sekulić et al., 2020). RFsp augments predictors with spatial information to improve accuracy in such settings.

Conceptually, the response at location \(s\) can be written as:

\[ Y(s) = f\left( X_G, X_R, X_P \right)\]

where \(X_R\) are reflectance-based covariates from remote sensing, \(X_P\) are process-based variables (e.g., topographic wetness index, terrain ruggedness index), and \(X_G\) encodes geographic proximity and spatial relationships among observations.

One formulation is:

\[ X_G = \left( d_{p1}, d_{p2}, d_{p3}, \ldots, d_{pN} \right)\]

where \(d_{pi}\) is the buffer distance between prediction site \(s\) and observed site \(p_i\) (\(i = 1, \ldots, N\)), and \(N\) is the number of training points. To capture terrain-driven spatial variation, we used the R package landmap to derive complex distance measures (Hengl, 2020).
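As a minimal sketch of how the geographic covariates \(X_G\) could be assembled, the Python snippet below computes one distance per observed site for a given prediction site. It assumes plain Euclidean distance in projected coordinates, which is a simplification of the landmap-derived distance measures used here. The function name `buffer_distances` and the coordinates are hypothetical.

```python
from math import hypot

def buffer_distances(site, observed):
    """Return (d_p1, ..., d_pN): distance from `site` to every observed site.

    Assumes Euclidean distance in a projected coordinate system; this is an
    illustrative simplification, not the distance measure used in the study.
    """
    sx, sy = site
    return [hypot(sx - px, sy - py) for px, py in observed]

# Three observed sites, so every prediction site receives N = 3 covariates.
observed = [(0.0, 0.0), (3.0, 4.0), (6.0, 8.0)]
x_g = buffer_distances((0.0, 0.0), observed)
print(x_g)
```

Each prediction site thus receives \(N\) extra predictor columns, so the width of the design matrix grows with the number of training points.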

Random Forest for Spatial Interpolation

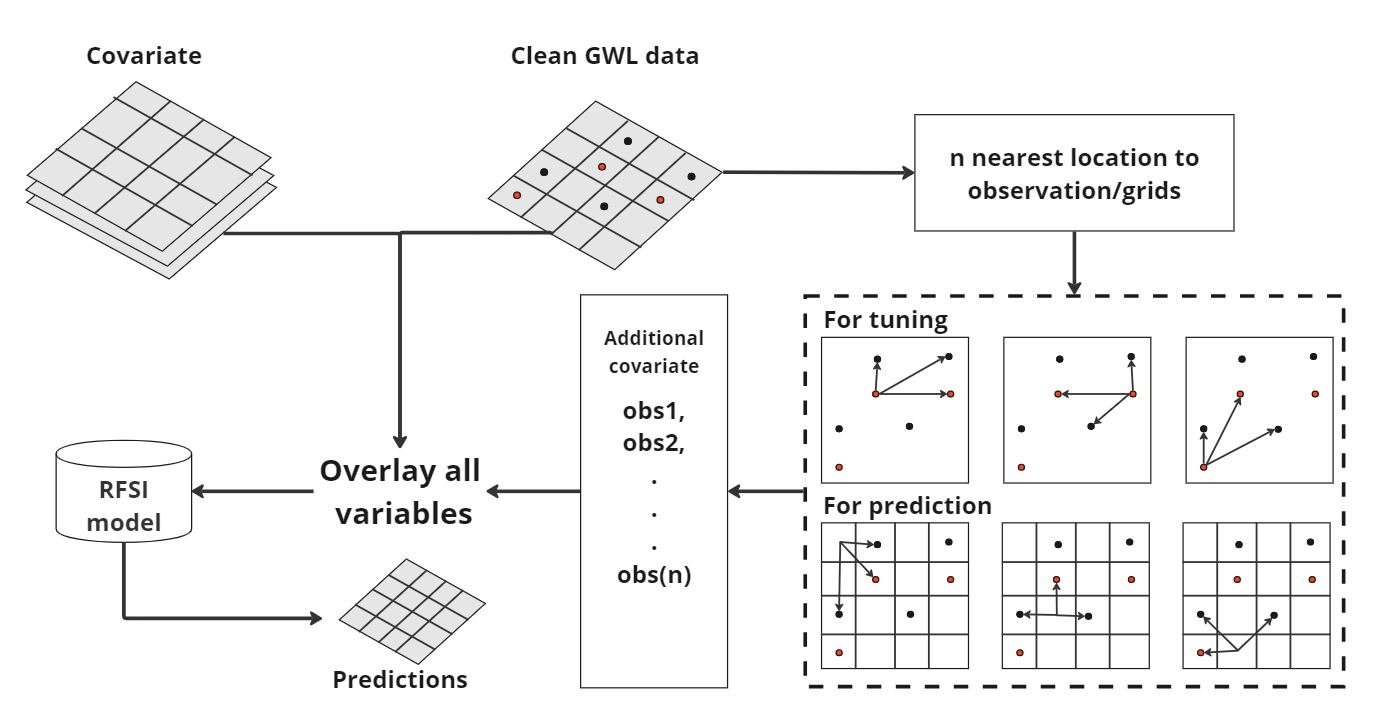

Random Forest for Spatial Interpolation (RFSI; Sekulić et al., 2020) is a more recent RF-based approach to spatial interpolation that incorporates local neighborhood context. The model adds covariates representing:

- The observed values at the \(n\) nearest sampled locations

- Their distances to the prediction site (Fig. 5)

Predictions are defined as:

\[ \hat{Z}(S_0) = f\big( x_1(S_0), \dots, x_m(S_0), z(S_1), d_1, z(S_2), d_2, z(S_3), d_3, \dots, z(S_n), d_n \big)\]

where:

- \(\hat{Z}(S_0)\) is the prediction at location \(S_0\)

- \(x_i(S_0)\) (\(i = 1, \dots, m\)) are environmental covariates at \(S_0\)

- \(z(S_i)\) is the observed response at the \(i\)-th nearest location \(S_i\)

- \(d_i = |S_i - S_0|\) is the distance between \(S_i\) and \(S_0\)

These spatial covariates are combined with the environmental predictors to inform the model (Hengl et al., 2018).
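The construction of one RFSI predictor row can be sketched in Python as follows. This illustrates the covariate layout only, assuming Euclidean distance and a brute-force neighbor search. The function name `rfsi_covariates` and the example values are hypothetical and not taken from the study.

```python
from math import hypot

def rfsi_covariates(s0, env, observations, n=3):
    """Build the predictor row for location s0.

    s0           -- (x, y) coordinates of the prediction site
    env          -- environmental covariates x_1(S_0), ..., x_m(S_0)
    observations -- list of ((x, y), z) pairs at sampled locations
    n            -- number of nearest observations to include
    Returns [x_1, ..., x_m, z(S_1), d_1, ..., z(S_n), d_n], nearest first.
    """
    by_distance = sorted(
        ((hypot(s0[0] - x, s0[1] - y), z) for (x, y), z in observations),
        key=lambda pair: pair[0],
    )
    row = list(env)
    for d, z in by_distance[:n]:
        row.extend([z, d])  # observed value, then its distance to s0
    return row

observations = [
    ((3.0, 4.0), 10.0),
    ((1.0, 0.0), 20.0),
    ((0.0, 2.0), 30.0),
    ((10.0, 10.0), 40.0),
]
row = rfsi_covariates((0.0, 0.0), env=[12.5], observations=observations, n=2)
print(row)
```

When rows like this are built for the training locations themselves, the observation at the location in question would need to be left out of the neighbor search. Otherwise the response would appear among its own predictors at distance zero.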