1.5. Introduction to spatial k-fold cross-validation for robust evaluation of limited data

(Approx. 10 min reading)

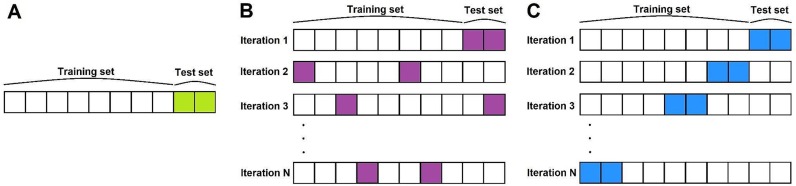

Evaluating the performance of a machine learning model is a critical step in any remote sensing workflow. However, in spatial applications such as agriculture, traditional evaluation methods often fail to reflect real-world conditions. A common approach in machine learning is k-fold cross-validation, in which the data are randomly split into k roughly equal parts, or “folds”; the model is trained on k − 1 folds and validated on the remaining one. This process is repeated k times, so that each fold serves as the validation set exactly once. While this method is statistically robust in many domains, it assumes that samples are independent of one another. That assumption rarely holds for geospatial data because of a phenomenon known as spatial autocorrelation (Fig. 6).
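For reference, the standard (non-spatial) procedure can be sketched in a few lines of NumPy. This is a minimal illustration rather than any particular library's implementation, and the function name `random_kfold_splits` is hypothetical. Note that sample locations play no role in how the folds are formed.

```python
import numpy as np

def random_kfold_splits(n_samples, k, seed=0):
    """Return (train_idx, val_idx) pairs for standard, location-agnostic k-fold CV."""
    rng = np.random.default_rng(seed)
    shuffled = rng.permutation(n_samples)   # random order: geography is ignored
    folds = np.array_split(shuffled, k)     # k folds of (nearly) equal size
    splits = []
    for i in range(k):
        val_idx = folds[i]                  # fold i is held out once
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        splits.append((train_idx, val_idx))
    return splits

splits = random_kfold_splits(n_samples=10, k=3)
for train_idx, val_idx in splits:
    print(len(train_idx), len(val_idx))     # prints "6 4", then "7 3", then "7 3"
```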

In remote sensing, nearby pixels or fields tend to be more similar to each other than to distant ones. This spatial dependence means that randomly splitting the dataset can place training and test samples right next to each other, so that they are highly correlated. As a result, the model may appear to perform well simply because it is being tested on areas that are very similar to the ones it was trained on. This can lead to inflated performance metrics and a false sense of model reliability. In reality, when the model is deployed to a new region or growing season, its performance may be substantially lower than the cross-validation estimate suggested, because random splitting never tested it on genuinely unseen conditions.
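The optimistic bias can be reproduced on synthetic data. The sketch below assumes a hypothetical 40 × 40 grid of samples whose target varies smoothly in space, and uses a 1-nearest-neighbour predictor on coordinates as a deliberately simple stand-in for any model that can exploit spatial proximity. It compares random 4-fold splits with folds formed from four spatial quadrants.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical study area: 1,600 samples on a regular 40 x 40 grid.
xs, ys = np.meshgrid(np.arange(40), np.arange(40))
coords = np.column_stack([xs.ravel(), ys.ravel()]).astype(float)

# Spatially autocorrelated target: a smooth surface plus small noise.
target = (np.sin(coords[:, 0] / 6) + np.cos(coords[:, 1] / 6)
          + rng.normal(0, 0.05, len(coords)))

def nn_predict(train_xy, train_y, test_xy):
    """1-nearest-neighbour prediction: copy the value of the closest training point."""
    d = np.linalg.norm(test_xy[:, None, :] - train_xy[None, :, :], axis=2)
    return train_y[d.argmin(axis=1)]

def cv_mae(fold_ids):
    """Mean absolute error pooled over all folds, given one fold label per sample."""
    errors = []
    for f in np.unique(fold_ids):
        test = fold_ids == f
        pred = nn_predict(coords[~test], target[~test], coords[test])
        errors.append(np.abs(pred - target[test]))
    return np.concatenate(errors).mean()

k = 4
random_folds = rng.permutation(len(coords)) % k                # ignores location
block_folds = (coords[:, 0] // 20) * 2 + (coords[:, 1] // 20)  # four 20 x 20 quadrants

random_mae = cv_mae(random_folds)
block_mae = cv_mae(block_folds)
print(f"random k-fold MAE:  {random_mae:.3f}")
print(f"spatial block MAE:  {block_mae:.3f}")
```

Exact values depend on the seed, but the random-split error comes out markedly lower: almost every randomly held-out point has an immediate neighbour in the training set, whereas points inside a held-out quadrant can be up to 20 grid cells from the nearest training sample.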

To address this problem, spatial k-fold cross-validation has emerged as a more appropriate method for evaluating models in geospatial settings. Instead of randomly splitting the data, spatial cross-validation divides the study area into spatially distinct regions or blocks. The model is trained on data from some of these regions and validated on the remaining ones. This keeps the validation set geographically separated from the training data, providing a more realistic estimate of how the model will perform in unseen areas.
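A grid-based version of spatial k-fold cross-validation can be sketched with NumPy alone. Assumptions: sample coordinates are in a projected coordinate system (metres), the 2 km tile size is an arbitrary illustrative choice, and the helper `spatial_block_folds` is hypothetical. The essential step is that whole tiles, rather than individual samples, are assigned to folds.

```python
import numpy as np

def spatial_block_folds(x, y, block_size, k, seed=0):
    """Give each sample a fold label such that every square tile is held out as a whole."""
    # Integer tile index along each axis.
    col = np.floor((x - x.min()) / block_size).astype(int)
    row = np.floor((y - y.min()) / block_size).astype(int)
    block_id = row * (col.max() + 1) + col           # one unique ID per tile
    rng = np.random.default_rng(seed)
    shuffled = rng.permutation(np.unique(block_id))  # shuffle tiles, not samples
    block_to_fold = {b: i % k for i, b in enumerate(shuffled)}
    folds = np.array([block_to_fold[b] for b in block_id])
    return folds, block_id

rng = np.random.default_rng(1)
x = rng.uniform(0, 10_000, 500)   # hypothetical field centroids (m)
y = rng.uniform(0, 10_000, 500)
folds, block_id = spatial_block_folds(x, y, block_size=2_000, k=5)

for f in range(5):
    val = folds == f
    shared = np.intersect1d(block_id[val], block_id[~val])
    print(f"fold {f}: {val.sum()} validation samples, tiles shared with training: {shared.size}")
```

Because tiles rather than samples are shuffled, fold sizes are only approximately balanced; the trade-off is that no tile ever contributes to both training and validation in the same round.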

There are several ways to implement spatial cross-validation. One common method is to divide the study area into a regular grid of tiles, so that each fold is made up of whole spatial blocks. Another approach uses clustering algorithms based on geographic coordinates to group nearby samples into folds. In agricultural remote sensing, these folds might represent different administrative zones, ecological regions, or randomly sampled spatial blocks. The key idea is to keep each spatial unit intact within a single fold, so that training and testing regions do not overlap.

Spatial k-fold cross-validation is particularly important when working with small datasets. In such cases, overfitting is a serious concern, and random splits can hide a model’s inability to generalise. By testing the model on geographically separate data, spatial cross-validation reveals how well it handles variability across different landscapes, soil types, cropping practices, or climate zones. This is especially relevant for national-scale monitoring systems and cross-border agricultural assessments, where spatial generalisation is essential.
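The clustering-based approach maps naturally onto scikit-learn: `KMeans` groups samples by their coordinates, and `GroupKFold` then ensures that each cluster falls entirely in either the training or the validation set. The data below are random placeholders (hypothetical coordinates, six hypothetical spectral features and a binary crop label), and the random forest is only an example model; with real data, the per-fold scores are the quantity of interest.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GroupKFold, cross_val_score

rng = np.random.default_rng(0)
n = 600
coords = rng.uniform(0, 50_000, size=(n, 2))   # hypothetical sample locations (m)
features = rng.normal(size=(n, 6))              # hypothetical spectral features
labels = (features[:, 0] + 0.5 * features[:, 1] > 0).astype(int)  # hypothetical crop class

# 1) Group nearby samples into spatial clusters using only their coordinates.
clusters = KMeans(n_clusters=10, n_init=10, random_state=0).fit_predict(coords)

# 2) GroupKFold never splits a cluster between training and validation.
cv = GroupKFold(n_splits=5)
model = RandomForestClassifier(n_estimators=100, random_state=0)
scores = cross_val_score(model, features, labels, groups=clusters, cv=cv)
print("per-fold accuracy:", np.round(scores, 3))
```

The number of clusters must be at least the number of folds; using more clusters than folds, as here, lets each fold combine several geographically separate clusters.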

In addition to providing more realistic performance estimates, spatial cross-validation can also guide model development. If a model consistently performs poorly in certain regions, this may indicate a need for additional training data, domain adaptation techniques, or the inclusion of local knowledge. Spatial validation results can also inform decision-makers about where the model is most reliable and where caution is needed. This transparency is crucial when deep learning models are used to inform policy, allocate resources, or support sustainable farming practices.
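To locate weak regions, out-of-fold predictions from spatial cross-validation can be broken down by region. A minimal NumPy sketch follows; the region names, labels and the 0.7 accuracy threshold are purely illustrative, and `per_region_accuracy` is a hypothetical helper.

```python
import numpy as np

def per_region_accuracy(y_true, y_pred, region):
    """Accuracy of out-of-fold predictions, broken down by spatial region."""
    return {str(r): float(np.mean(y_true[region == r] == y_pred[region == r]))
            for r in np.unique(region)}

# Hypothetical out-of-fold results for three regions
y_true = np.array([1, 0, 1, 1, 0, 1, 0, 0, 1, 1])
y_pred = np.array([1, 0, 1, 1, 1, 0, 0, 0, 1, 1])
region = np.array(["north", "north", "north", "east", "east", "east",
                   "south", "south", "south", "south"])

for r, acc in per_region_accuracy(y_true, y_pred, region).items():
    flag = "  <- review training data / consider domain adaptation" if acc < 0.7 else ""
    print(f"{r}: {acc:.2f}{flag}")
# east: 0.33 (flagged), north: 1.00, south: 1.00
```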

Another consideration is computational cost. Spatial cross-validation involves training the model k times, once per fold, which can be time-consuming, especially with deep learning architectures. However, the trade-off is generally worthwhile, given the improved reliability of model evaluation. Spatial validation is increasingly recognised as best practice in geospatial machine learning, particularly in research that informs policy.

In summary, spatial k-fold cross-validation addresses one of the core challenges in applying deep learning to remote sensing: ensuring that model evaluation reflects real-world deployment scenarios. By separating training and test data geographically, it provides a more honest assessment of generalisation performance. This is especially important in agricultural contexts, where models are often applied across diverse and heterogeneous landscapes. Incorporating spatial cross-validation into model workflows helps build trust in model outputs, guides improvements, and supports robust, data-efficient remote sensing applications in agriculture.