1.3.

Overview of emerging Deep Learning techniques for small data in Remote Sensing

(Approx. 30 min reading)

Deep learning has revolutionised the way we analyse remote sensing data. Convolutional neural networks (CNNs), recurrent networks, and, more recently, transformer-based architectures have demonstrated state-of-the-art performance in tasks such as land cover classification, crop type mapping, yield prediction, and vegetation monitoring. These models are highly effective when trained on large, annotated datasets. However, in real-world agricultural and socio-environmental applications, access to such datasets is limited: high-quality labeled ground truth data are often constrained by cost, time, access, privacy, or environmental heterogeneity. As a result, many researchers and practitioners find themselves working in what is known as a small data regime. In response, the deep learning community has developed a range of techniques aimed specifically at improving model performance when labeled data are scarce. This section provides an overview of ten such techniques, each of which has emerged as a promising solution to the small data problem in remote sensing.

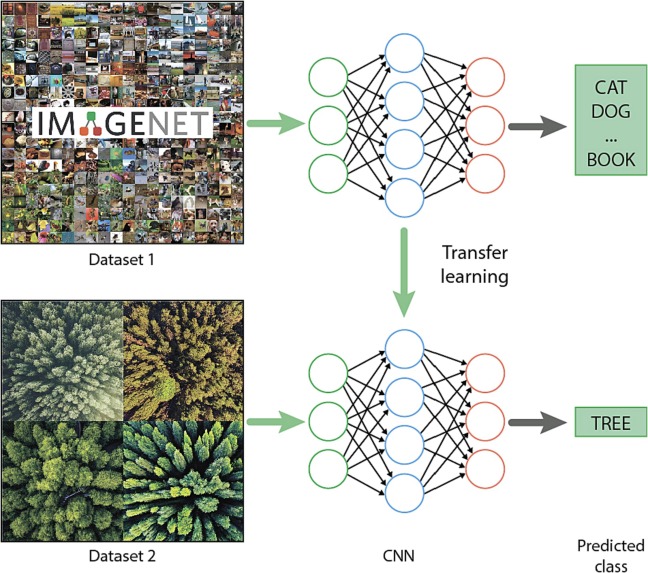

The first technique is transfer learning. It involves taking a model that has been pretrained on a large dataset and adapting it to a new but related task. In practice, this means using models trained on large-scale image datasets such as ImageNet or remote sensing datasets like BigEarthNet as a foundation. The pretrained model already possesses a rich set of learned visual features, which can then be fine-tuned on a much smaller target dataset. In the context of agricultural remote sensing, transfer learning is widely used to classify crop types, detect field boundaries, or monitor land use changes when only limited training samples are available. By leveraging knowledge acquired from broader domains, transfer learning reduces the need to train models from scratch and mitigates the risks of overfitting that often accompany small datasets (Fig. 2).
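The fine-tuning idea can be illustrated with a minimal NumPy sketch. It assumes a frozen feature extractor standing in for a pretrained backbone: the matrix `W_backbone` is a random placeholder, not real ImageNet or BigEarthNet weights, and the dataset is synthetic. Only the new classification head is trained on the small target dataset.

```python
import numpy as np

rng = np.random.default_rng(0)

# Placeholder for a pretrained backbone (assumption): in practice these weights
# would be loaded from a model trained on ImageNet or BigEarthNet.
W_backbone = rng.normal(size=(16, 32))

def extract_features(x):
    """Frozen feature extractor: its weights are never updated."""
    feats = np.maximum(x @ W_backbone, 0.0)  # linear layer followed by ReLU
    return feats / (np.linalg.norm(feats, axis=1, keepdims=True) + 1e-8)

def fine_tune_head(x, y, lr=0.1, epochs=200):
    """Train only a new logistic-regression head on top of the frozen features."""
    feats = extract_features(x)
    w, b = np.zeros(feats.shape[1]), 0.0
    losses = []
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(feats @ w + b)))
        losses.append(-np.mean(y * np.log(p + 1e-9) + (1 - y) * np.log(1 - p + 1e-9)))
        grad = p - y  # gradient of the cross-entropy w.r.t. the logits
        w -= lr * feats.T @ grad / len(y)
        b -= lr * grad.mean()
    return w, b, losses

# Small synthetic target dataset: 20 samples, 16 input features, 2 classes.
x_small = rng.normal(size=(20, 16))
y_small = (x_small[:, 0] > 0).astype(float)

backbone_before = W_backbone.copy()
w_head, b_head, losses = fine_tune_head(x_small, y_small)
```

Freezing the backbone is only one option. With somewhat more labeled data, the upper backbone layers are often unfrozen and trained with a small learning rate.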

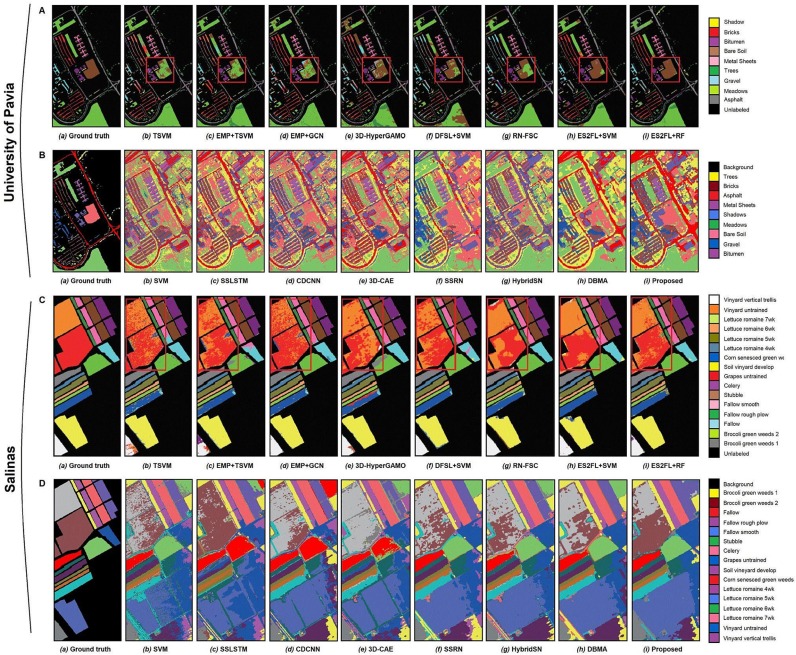

Another widely adopted method is self-supervised learning. Unlike traditional supervised learning, which depends on labeled data, self-supervised learning extracts supervisory signals directly from the data itself. This is done by creating artificial tasks, known as pretext tasks, that the model learns to solve. These tasks can include predicting missing image patches, solving spatial jigsaw puzzles, or identifying the temporal order of satellite image sequences. Once a model has learned useful representations through these pretext tasks, it can be fine-tuned on a small labeled dataset to perform the actual task of interest. In remote sensing, self-supervised learning has proven effective in capturing meaningful patterns from massive archives of unlabeled satellite imagery (Fig. 3). For agricultural applications, it enables models to learn from temporal dynamics, spatial textures, and spectral patterns without requiring ground-truth data at scale. The representations learned in this way are often robust and transferable, particularly in time-series analysis and crop monitoring.
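As an illustration of how a pretext task derives its labels from the data itself, the sketch below builds one training example for missing-patch prediction. The function name, tile size, and band count are assumptions chosen for the example. The training of a model on these pairs is not shown.

```python
import numpy as np

def make_masked_patch_example(image, patch_size, rng):
    """Hide a random square patch; the hidden pixels become the training target."""
    height, width = image.shape[:2]
    top = int(rng.integers(0, height - patch_size + 1))
    left = int(rng.integers(0, width - patch_size + 1))
    target = image[top:top + patch_size, left:left + patch_size].copy()
    masked = image.copy()
    masked[top:top + patch_size, left:left + patch_size] = 0.0  # mask value
    return masked, target, (top, left)

rng = np.random.default_rng(0)
# Synthetic unlabeled tile: 16 x 16 pixels, 4 spectral bands, strictly positive values.
tile = rng.random((16, 16, 4)) + 0.1
masked_tile, target_patch, (top, left) = make_masked_patch_example(tile, 4, rng)
```

A model trained to reconstruct `target_patch` from `masked_tile` needs no human annotation, so the same procedure can be applied to every tile in an unlabeled archive.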

Semi-supervised learning is a related strategy that combines a small amount of labeled data with a large pool of unlabeled data. In agricultural remote sensing, it is common to have sparse annotations for certain crops or regions alongside extensive image coverage. Semi-supervised methods can use techniques like pseudo-labeling, where a model trained on labeled data is used to predict labels for the unlabeled samples. These predictions are then incorporated into the training process to improve performance iteratively. Another approach involves enforcing consistency in predictions across augmented versions of the same data, thereby improving the stability and reliability of the model. Semi-supervised learning helps bridge the gap between the abundance of unlabeled satellite images and the scarcity of ground-truth annotations, allowing for more effective model training without a proportional increase in manual labeling efforts.
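The pseudo-labeling loop can be sketched as follows. This is a minimal illustration under stated assumptions: a nearest-centroid classifier stands in for a deep model, the data are two synthetic clusters, and the confidence threshold of 0.95 is an arbitrary choice.

```python
import numpy as np

def fit_centroids(x, y, n_classes):
    """Stand-in model (assumption): one mean vector per class."""
    return np.stack([x[y == c].mean(axis=0) for c in range(n_classes)])

def predict_proba(x, centroids):
    """Softmax over negative distances to the class centroids."""
    dist = np.linalg.norm(x[:, None, :] - centroids[None, :, :], axis=2)
    scores = np.exp(-(dist - dist.min(axis=1, keepdims=True)))
    return scores / scores.sum(axis=1, keepdims=True)

def self_train(x_lab, y_lab, x_unlab, n_classes, threshold=0.95, rounds=5):
    """Iteratively add confidently predicted unlabeled samples to the training set."""
    x_train, y_train, pool = x_lab.copy(), y_lab.copy(), x_unlab.copy()
    for _ in range(rounds):
        if len(pool) == 0:
            break
        proba = predict_proba(pool, fit_centroids(x_train, y_train, n_classes))
        confident = proba.max(axis=1) >= threshold
        if not confident.any():
            break
        x_train = np.vstack([x_train, pool[confident]])
        y_train = np.concatenate([y_train, proba[confident].argmax(axis=1)])
        pool = pool[~confident]  # keep only the samples that are still unlabeled
    return fit_centroids(x_train, y_train, n_classes), len(y_train)

rng = np.random.default_rng(0)
centers = np.array([[0.0, 0.0], [6.0, 6.0]])
y_unlab_true = np.repeat([0, 1], 100)  # used for evaluation only
x_unlab = centers[y_unlab_true] + rng.normal(size=(200, 2))
y_lab = np.array([0, 0, 0, 1, 1, 1])   # three labeled samples per class
x_lab = centers[y_lab] + rng.normal(size=(6, 2))

centroids, n_train = self_train(x_lab, y_lab, x_unlab, n_classes=2)
accuracy = (predict_proba(x_unlab, centroids).argmax(axis=1) == y_unlab_true).mean()
```

The threshold controls the trade-off between adding more pseudo-labels and adding wrong ones. Wrong pseudo-labels reinforce themselves over later rounds, which is why only confident predictions are accepted here.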

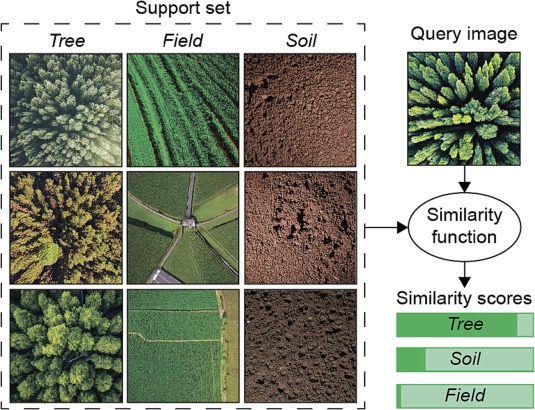

Few-shot learning offers a different approach by enabling models to recognize new classes from only a handful of labeled examples (Fig. 4). It is particularly relevant in agricultural applications where rare crops, unusual land management practices, or specific ecological features are underrepresented in the training set. Few-shot learning frameworks are designed to generalize quickly with minimal supervision. They typically use meta-learning or similarity-based architectures to learn how to learn, focusing on extracting features that are transferable across different tasks. In a typical setup, the model is trained to distinguish between classes using only one or a few examples per class and to adapt rapidly when introduced to novel categories. This makes few-shot learning especially powerful in cases where labeled data collection is prohibitively difficult or expensive.
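One common similarity-based setup is the N-way K-shot episode, sketched below with nearest-prototype classification. The sketch assumes the embeddings already exist. In a meta-learning framework they would come from a network trained across many such episodes, whereas here they are synthetic vectors.

```python
import numpy as np

def sample_episode(x, y, n_way, k_shot, n_query, rng):
    """Draw an N-way K-shot episode: a small support set and a query set."""
    classes = rng.choice(np.unique(y), size=n_way, replace=False)
    s_x, s_y, q_x, q_y = [], [], [], []
    for episode_label, c in enumerate(classes):
        idx = rng.permutation(np.flatnonzero(y == c))[:k_shot + n_query]
        s_x.append(x[idx[:k_shot]])
        q_x.append(x[idx[k_shot:]])
        s_y += [episode_label] * k_shot
        q_y += [episode_label] * n_query
    return np.vstack(s_x), np.array(s_y), np.vstack(q_x), np.array(q_y)

def prototype_predict(support_x, support_y, query_x):
    """Assign each query sample to the class with the nearest mean embedding."""
    classes = np.unique(support_y)
    prototypes = np.stack([support_x[support_y == c].mean(axis=0) for c in classes])
    dist = ((query_x[:, None, :] - prototypes[None, :, :]) ** 2).sum(axis=2)
    return classes[dist.argmin(axis=1)]

rng = np.random.default_rng(0)
labels = np.repeat(np.arange(5), 20)  # 5 classes, 20 samples each
# Synthetic embeddings (assumption): well-separated class centres plus noise.
embeddings = 10.0 * np.eye(5, 8)[labels] + rng.normal(size=(100, 8))

s_x, s_y, q_x, q_y = sample_episode(embeddings, labels, n_way=3, k_shot=3,
                                    n_query=5, rng=rng)
accuracy = (prototype_predict(s_x, s_y, q_x) == q_y).mean()
```

Because a prototype is just a class mean, a novel crop class can be added at inference time by averaging the embeddings of its few labeled examples, without retraining.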

Zero-shot learning extends this idea further by allowing models to make predictions for categories that were never seen during training. This is achieved by connecting visual inputs to semantic information, such as textual descriptions or structured knowledge about the target classes. In remote sensing, zero-shot learning might be used to identify new land cover types or crop species in a region where no training samples exist but where some descriptive information is available. This could include plant phenology, growth conditions, or spectral characteristics drawn from expert knowledge or external databases. Zero-shot learning is a promising approach for improving model generalisability, particularly in rapidly changing agricultural landscapes or when attempting to scale models globally without collecting new labels for every region.
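A minimal attribute-based sketch of this idea is given below. It is built entirely on assumptions made for illustration: each class is described by four binary attributes, visual features are simulated as a noisy linear function of those attributes, and a ridge regression learned on seen classes maps features back to attribute space.

```python
import numpy as np

def fit_feature_to_attribute_map(features, attributes, ridge=0.1):
    """Ridge regression from visual features to semantic attribute vectors."""
    gram = features.T @ features + ridge * np.eye(features.shape[1])
    return np.linalg.solve(gram, features.T @ attributes)

def zero_shot_predict(features, mapping, class_attributes):
    """Project features into attribute space and pick the nearest class description."""
    projected = features @ mapping
    dist = np.linalg.norm(projected[:, None, :] - class_attributes[None, :, :], axis=2)
    return dist.argmin(axis=1)

rng = np.random.default_rng(0)
# Hypothetical attribute descriptions (e.g. irrigated, perennial, ...) per class.
seen_attrs = np.array([[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0],
                       [0, 0, 0, 1], [1, 1, 0, 0], [0, 0, 1, 1]], dtype=float)
unseen_attrs = np.array([[1, 0, 1, 0], [0, 1, 0, 1]], dtype=float)
attr_to_feature = rng.normal(size=(4, 10))  # synthetic link, an assumption

def simulate(attrs, labels):
    """Generate synthetic 10-dimensional features for the given class labels."""
    noise = 0.02 * rng.normal(size=(len(labels), 10))
    return attrs[labels] @ attr_to_feature + noise

y_seen = np.repeat(np.arange(6), 30)
x_seen = simulate(seen_attrs, y_seen)
mapping = fit_feature_to_attribute_map(x_seen, seen_attrs[y_seen])

# Classes below were never used for training; only their descriptions are known.
y_unseen = np.repeat(np.arange(2), 25)
x_unseen = simulate(unseen_attrs, y_unseen)
accuracy = (zero_shot_predict(x_unseen, mapping, unseen_attrs) == y_unseen).mean()
```

The sketch works only because the unseen classes are described with the same attributes as the seen ones. Real applications depend on how well the semantic descriptions capture what is visible in the imagery.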

Active learning takes a more iterative and interactive approach to data collection. Instead of labeling a random sample of data, the model actively selects the most informative or uncertain samples to be annotated by a human expert. This targeted labeling strategy ensures that each new label contributes maximally to model improvement. In the agricultural context, this can dramatically reduce the number of labeled examples needed to reach a desired level of accuracy. Active learning workflows often include a loop in which the model is trained, queried, updated with new labels, and retrained, gradually improving over time. This method is particularly well-suited for operational settings where annotation costs are high and expert time is limited.
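The query loop described above can be sketched with entropy-based uncertainty sampling. The nearest-centroid model, the synthetic pool, and the `oracle` callable (a stand-in for the human expert) are all assumptions made for the example.

```python
import numpy as np

def entropy(proba):
    """Predictive entropy per sample; higher means more uncertain."""
    p = np.clip(proba, 1e-12, 1.0)
    return -(p * np.log(p)).sum(axis=1)

def query_most_uncertain(proba, batch_size):
    """Positions of the batch_size samples with the highest entropy."""
    return np.argsort(-entropy(proba), kind="stable")[:batch_size]

def centroid_proba(x_train, y_train, x, n_classes):
    """Stand-in model (assumption): softmax over negative centroid distances."""
    centroids = np.stack([x_train[y_train == c].mean(axis=0) for c in range(n_classes)])
    dist = np.linalg.norm(x[:, None, :] - centroids[None, :, :], axis=2)
    scores = np.exp(-(dist - dist.min(axis=1, keepdims=True)))
    return scores / scores.sum(axis=1, keepdims=True)

def active_learning_loop(x_pool, oracle, initial_idx, n_classes, rounds=3, batch_size=2):
    """Train, query the most uncertain samples, collect labels, and repeat."""
    labeled = {int(i): oracle(int(i)) for i in initial_idx}
    for _ in range(rounds):
        idx = np.array(sorted(labeled))
        y = np.array([labeled[i] for i in idx])
        candidates = np.array([i for i in range(len(x_pool)) if i not in labeled])
        proba = centroid_proba(x_pool[idx], y, x_pool[candidates], n_classes)
        for i in candidates[query_most_uncertain(proba, batch_size)]:
            labeled[int(i)] = oracle(int(i))  # the expert annotates this sample
    return labeled

rng = np.random.default_rng(0)
true_labels = np.repeat([0, 1], 50)  # known only to the oracle
x_pool = np.array([[0.0, 0.0], [3.0, 3.0]])[true_labels] + rng.normal(size=(100, 2))
labeled = active_learning_loop(x_pool, oracle=lambda i: int(true_labels[i]),
                               initial_idx=[0, 50], n_classes=2)
```

Entropy is one of several possible query criteria. Margin-based or committee-based selection would fit the same loop by replacing `query_most_uncertain`.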

Weakly supervised learning refers to approaches that train models using labels that are coarse, incomplete, or noisy. These might include region-level labels, indirect indicators, or approximations based on ancillary data. For example, instead of having a pixel-wise crop label, the model may be trained on field-level crop information or administrative statistics. While such labels are less precise, they are often much easier to obtain and can still provide valuable supervision. Weak supervision is especially important in agricultural remote sensing, where data from farmers, government reports, or crowd-sourced platforms may be available but lack fine spatial resolution. These approaches require models that are robust to label noise and capable of learning from imprecise information.
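One way to train a pixel-level model from field-level labels is to aggregate the pixel predictions within each field and compare the aggregate with the field label. The sketch below shows only this loss computation. Mean pooling and the tiny hand-made arrays are assumptions chosen for illustration.

```python
import numpy as np

def field_level_loss(pixel_proba, field_ids, field_labels):
    """Cross-entropy between mean-pooled pixel predictions and field-level labels.

    pixel_proba:  (n_pixels, n_classes) class probabilities predicted per pixel
    field_ids:    (n_pixels,) identifier of the field each pixel belongs to
    field_labels: dict mapping field identifier to its crop class index
    """
    losses = []
    for field_id, label in field_labels.items():
        field_mean = pixel_proba[field_ids == field_id].mean(axis=0)
        losses.append(-np.log(field_mean[label] + 1e-12))
    return float(np.mean(losses))

# Six pixels in two fields; only the field-level crop label is known.
field_ids = np.array([1, 1, 1, 2, 2, 2])
field_labels = {1: 0, 2: 1}

perfect = np.array([[1.0, 0.0]] * 3 + [[0.0, 1.0]] * 3)
uninformed = np.full((6, 2), 0.5)

loss_perfect = field_level_loss(perfect, field_ids, field_labels)
loss_uninformed = field_level_loss(uninformed, field_ids, field_labels)
```

Because the loss is computed on the field average, individual pixels are free to deviate from the field label, which leaves room for within-field heterogeneity such as bare patches or field margins.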

Multitask learning addresses small data problems by training models to perform multiple related tasks simultaneously. In doing so, it allows different tasks to share information through a common representation. In remote sensing, this might involve training a model to simultaneously classify land cover, estimate vegetation indices, and predict crop yield. The rationale is that by learning shared patterns across tasks, the model can generalize better, particularly when each task alone has limited labeled data. In agriculture, multitask learning can improve accuracy in tasks like crop mapping by incorporating auxiliary information such as phenological stages or climatic variables. This also provides a form of regularization, helping to reduce overfitting.
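The shared-representation idea can be sketched as a network with one common hidden layer and two task heads, trained on a weighted sum of the task losses. The sketch below shows the forward pass and the combined loss only. The layer sizes, the task weight of 0.5, and the random data are assumptions, and the gradient step is omitted.

```python
import numpy as np

def init_params(n_inputs, n_hidden, n_classes, rng):
    """One shared layer plus a classification head and a regression head."""
    return {
        "shared": rng.normal(scale=0.1, size=(n_inputs, n_hidden)),
        "cls_head": rng.normal(scale=0.1, size=(n_hidden, n_classes)),
        "reg_head": rng.normal(scale=0.1, size=(n_hidden, 1)),
    }

def forward(params, x):
    """Both heads read the same hidden representation."""
    hidden = np.tanh(x @ params["shared"])
    logits = hidden @ params["cls_head"]               # e.g. land cover class
    yield_pred = (hidden @ params["reg_head"]).ravel()  # e.g. crop yield
    return logits, yield_pred

def multitask_loss(params, x, y_class, y_yield, yield_weight=0.5):
    """Weighted sum of cross-entropy (classification) and MSE (regression)."""
    logits, yield_pred = forward(params, x)
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_proba = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    ce = -log_proba[np.arange(len(y_class)), y_class].mean()
    mse = np.mean((yield_pred - y_yield) ** 2)
    return ce + yield_weight * mse, ce, mse

rng = np.random.default_rng(0)
params = init_params(n_inputs=6, n_hidden=12, n_classes=3, rng=rng)
x = rng.normal(size=(8, 6))
y_class = rng.integers(0, 3, size=8)
y_yield = rng.normal(size=8)

logits, yield_pred = forward(params, x)
total, ce, mse = multitask_loss(params, x, y_class, y_yield)
```

Since gradients from both losses flow into `params["shared"]`, each task constrains the representation used by the other, which is the source of the regularizing effect.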

Process-aware learning is a more recent and emerging area of deep learning research. It integrates domain knowledge and physical processes into the architecture or training procedure of the model. Instead of learning purely from data, process-aware models are constrained or guided by what is already known about the underlying systems. In agriculture, this might involve incorporating crop growth models, weather dynamics, or soil moisture balances into the learning framework. These constraints help the model to make predictions that are physically plausible and ecologically meaningful, even when labeled data are limited. This approach can improve interpretability and trust, particularly in scientific and policy-oriented applications where model outputs need to be explainable and grounded in theory.
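A common way to encode such knowledge is to add a penalty to the training loss whenever predictions violate a known process equation. The sketch below assumes a deliberately simplified daily soil water balance, in which the change in storage equals precipitation minus evapotranspiration. Runoff and drainage are ignored, and all values are invented for illustration.

```python
import numpy as np

def water_balance_residual(soil_moisture, precip, et):
    """Violation of the simplified balance S[t+1] - S[t] = P[t] - ET[t]."""
    return np.diff(soil_moisture) - (precip[:-1] - et[:-1])

def process_aware_loss(pred, obs, precip, et, physics_weight=1.0):
    """Data misfit on observed days plus a penalty for violating the balance."""
    observed = ~np.isnan(obs)
    data_loss = np.mean((pred[observed] - obs[observed]) ** 2)
    physics_loss = np.mean(water_balance_residual(pred, precip, et) ** 2)
    return data_loss + physics_weight * physics_loss, data_loss, physics_loss

precip = np.array([5.0, 0.0, 0.0, 2.0, 0.0])           # mm per day
et = np.array([1.0, 2.0, 2.0, 1.0, 1.0])               # mm per day
obs = np.array([20.0, np.nan, np.nan, 20.0, np.nan])   # only two labeled days

consistent = np.array([20.0, 24.0, 22.0, 20.0, 21.0])  # obeys the balance
flat = np.full(5, 20.0)                                # fits the labels, ignores physics

loss_consistent = process_aware_loss(consistent, obs, precip, et)
loss_flat = process_aware_loss(flat, obs, precip, et)
```

Both candidate series match the two available observations exactly, so the data term alone cannot tell them apart. Only the physics term separates the plausible trajectory from the implausible one, which is how the constraint compensates for sparse labels.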

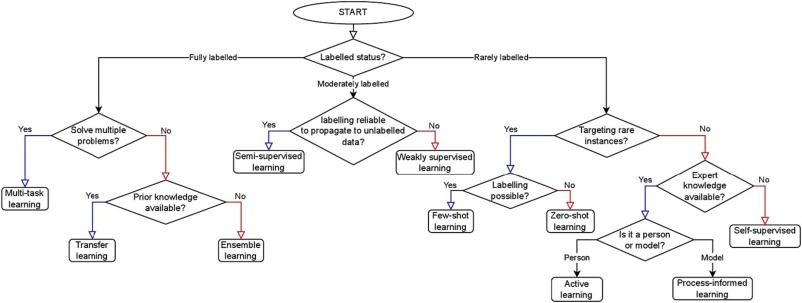

Finally, ensemble learning involves training multiple models and combining their predictions to improve robustness and performance. Ensembles can include different model architectures, training subsets, or random initializations. In a small data context, ensembles help reduce variance and compensate for the limited capacity of individual models to generalize. They are particularly effective in providing uncertainty estimates, which are valuable when working with scarce and noisy data. In agricultural remote sensing, ensemble methods are often used to combine predictions from models trained on different seasons, regions, or data sources, resulting in more stable and reliable outputs.

Each of these ten deep learning strategies addresses a different facet of the small data problem. Some, such as self-supervised and semi-supervised learning, focus on leveraging the vast amounts of unlabeled data that are readily available from satellite archives. Others, such as few-shot and zero-shot learning, aim to reduce the reliance on labeled data altogether by enabling models to learn quickly or generalize beyond their training scope. Techniques like active learning and weak supervision optimize the use of limited labeling resources, while multitask and process-aware learning improve model performance by incorporating additional structure or external knowledge. Ensemble learning serves as a final layer of robustness, helping to stabilise predictions and quantify uncertainty.
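To make the ensemble idea concrete, the sketch below trains several models on bootstrap resamples of a small dataset and uses the spread of their predictions as a simple uncertainty estimate. The linear model, the synthetic data, and the ensemble size of 25 are assumptions chosen to keep the example short.

```python
import numpy as np

def fit_line(x, y):
    """Least-squares fit of y = slope * x + intercept."""
    design = np.column_stack([x, np.ones_like(x)])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

def bagged_predict(x_train, y_train, x_new, n_members, rng):
    """Train each member on a bootstrap resample and combine the predictions."""
    preds = []
    for _ in range(n_members):
        idx = rng.integers(0, len(x_train), size=len(x_train))  # sample with replacement
        slope, intercept = fit_line(x_train[idx], y_train[idx])
        preds.append(slope * x_new + intercept)
    preds = np.stack(preds)
    # Mean is the ensemble prediction; standard deviation is the member disagreement.
    return preds.mean(axis=0), preds.std(axis=0)

rng = np.random.default_rng(0)
x_train = rng.uniform(0.0, 1.0, size=15)  # only 15 labeled samples
y_train = 2.0 * x_train + 1.0 + rng.normal(scale=0.1, size=15)
x_new = np.array([0.25, 0.5, 0.75])

mean_pred, spread = bagged_predict(x_train, y_train, x_new, n_members=25, rng=rng)
```

Large values of `spread` flag inputs on which the members disagree, which is useful for deciding where predictions should be treated with caution or where new labels would help most.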

This integrated perspective is visually captured in Fig. 5 by Safonova et al. (2021), reproduced below, which highlights the unique contributions of each method to addressing the small data challenge. The figure illustrates how each technique supports specific strategies, such as reducing the need for labeled data, incorporating prior knowledge, and improving generalisation performance.

For further clarity, the table below summarises the main contributions of each technique as they relate to unlabeled data use, labeling efficiency, incorporation of prior knowledge, and generalisation potential.

| Technique | Uses unlabeled data | Reduces labeling needs | Integrates prior knowledge | Improves generalization |
|---|---|---|---|---|
| Transfer learning | ✔️ | ✔️ | ✖️ | ✔️ |
| Self-supervised learning | ✔️ | ✔️ | ✖️ | ✔️ |
| Semi-supervised learning | ✔️ | ✔️ | ✖️ | ✔️ |
| Few-shot learning | ✖️ | ✔️ | ✖️ | ✔️ |
| Zero-shot learning | ✖️ | ✔️ | ✔️ | ✔️ |
| Active learning | ✖️ | ✔️ | ✖️ | ✔️ |
| Weakly supervised learning | ✖️ | ✔️ | ✖️ | ✔️ |
| Multitask learning | ✖️ | ✖️ | ✖️ | ✔️ |
| Process-aware learning | ✖️ | ✔️ | ✔️ | ✔️ |
| Ensemble learning | ✖️ | ✖️ | ✖️ | ✔️ |

As deep learning continues to evolve, these strategies will remain essential for applying AI to global environmental challenges. In the context of agriculture, they not only improve the technical performance of models but also enable broader participation and fairness by reducing dependency on expensive and regionally concentrated labeled datasets. This opens the door for scalable, data-efficient, and context-aware remote sensing models that can support sustainable agriculture across diverse geographies.