1.3. Overview of spatiotemporal data and its relevance in spatial interpolation

The increasing availability of spatial and temporal data across the environmental sciences has transformed the way researchers analyze and predict dynamic Earth-system processes. Spatiotemporal datasets, which integrate both spatial and temporal dimensions, are now fundamental to understanding how environmental variables evolve over time and across space. Their use has become particularly significant in hydrology, ecology, climatology, and agricultural monitoring, where processes such as groundwater fluctuation, soil moisture variation, and vegetation growth exhibit strong spatiotemporal dependency (Hengl et al., 2018; Georganos et al., 2021).

1. Spatiotemporal Data and the R ‘spacetime’ Framework

The spacetime package developed by Pebesma (2012) marked a milestone in R-based spatial data analysis by introducing formal classes for datasets that combine spatial geometries with temporal indices. It builds on the sp and xts packages to create integrated classes that link spatial objects (points, polygons, grids) with the corresponding time series. This framework enables efficient management, visualization, and analysis of spatiotemporal phenomena such as precipitation trends, temperature anomalies, or groundwater level dynamics.
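
The sketch below makes the idea of linking sites with time series concrete. It is a minimal Python version of a "full" space–time layout, in which every site carries a value at every time step; spacetime calls this layout STF/STFDF. The class FullSpaceTimeGrid and its methods are hypothetical and do not belong to spacetime or any other library. They only mirror the underlying data structure, under the assumption that missing values are stored as NaN.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class FullSpaceTimeGrid:
    """Hypothetical Python analogue of a 'full' space-time layout:
    every site has a value at every time step (gaps stored as NaN)."""

    coords: np.ndarray  # (n_sites, 2) x/y coordinates of the sites
    times: np.ndarray   # (n_times,) time stamps, e.g. datetime64[D]
    values: np.ndarray  # (n_times, n_sites) observations

    def __post_init__(self):
        expected = (len(self.times), len(self.coords))
        if self.values.shape != expected:
            raise ValueError(f"values must have shape {expected}")

    def site_series(self, site):
        """Time series observed at one site."""
        return self.values[:, site]

    def snapshot(self, t):
        """Spatial field at one time step."""
        return self.values[t, :]

    def to_long(self):
        """One (x, y, time, value) record per site-time combination,
        ordered time-major to match values.ravel()."""
        n_times, n_sites = self.values.shape
        x = np.tile(self.coords[:, 0], n_times)
        y = np.tile(self.coords[:, 1], n_times)
        t = np.repeat(self.times, n_sites)
        return x, y, t, self.values.ravel()


# Three hypothetical piezometers observed on two days (illustrative values).
coords = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
times = np.array(["2020-01-01", "2020-01-02"], dtype="datetime64[D]")
values = np.array([[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]])
grid = FullSpaceTimeGrid(coords, times, values)
x, y, t, v = grid.to_long()
```

Storing the values as a (time × site) matrix turns both a site's time series and a time step's spatial snapshot into simple slices. Sparse or irregular sampling designs would need a different layout.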

Used together with gstat, which provides space–time variogram fitting and kriging for spacetime objects, the package supports space–time kriging and covariance modeling. These are needed when spatial and temporal correlation must be modeled jointly rather than separately. More recent packages such as stars (spatiotemporal arrays and data cubes) and terra (raster data) have extended these capabilities, improving computational efficiency and compatibility with high-resolution satellite products. These R-based tools make it possible to conduct large-scale environmental modeling with open-source, reproducible workflows (Pebesma & Bivand, 2023).
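
The simplest joint space–time covariance model is the separable one, in which the covariance factorizes into a purely spatial part and a purely temporal part. gstat also supports richer families, such as product-sum and metric models. The Python sketch below assumes exponential spatial and temporal components and illustrative parameter values. The function names are hypothetical.

```python
import numpy as np


def exp_corr(lag, range_):
    """Exponential correlation exp(-|lag| / range_)."""
    return np.exp(-np.abs(np.asarray(lag, dtype=float)) / range_)


def separable_cov(h, u, sill, space_range, time_range):
    """Separable space-time covariance C(h, u) = sill * c_s(h) * c_t(u),
    with h the spatial lag and u the temporal lag.
    Exponential components are an assumption for illustration."""
    return sill * exp_corr(h, space_range) * exp_corr(u, time_range)


def separable_variogram(h, u, sill, space_range, time_range):
    """Matching variogram gamma(h, u) = C(0, 0) - C(h, u)."""
    return sill - separable_cov(h, u, sill, space_range, time_range)


# Space-time points as (x, y, t); units are illustrative (km, days).
pts = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 2.0]])
h = np.linalg.norm(pts[:, None, :2] - pts[None, :, :2], axis=-1)  # spatial lags
u = pts[:, None, 2] - pts[None, :, 2]                              # temporal lags
K = separable_cov(h, u, sill=1.0, space_range=2.0, time_range=5.0)
```

Separability is convenient because the joint covariance follows directly from two one-dimensional models. However, it cannot represent interactions in which spatial correlation changes with temporal lag. This limitation is the reason non-separable families exist.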

2. Relevance of Spatial Information in Spatial Interpolation

Spatial interpolation is a fundamental task in geostatistics and spatial analysis. It estimates values at unsampled locations by exploiting the spatial autocorrelation of observed data. The spatial information used, specifically distance, direction, and neighbourhood relationships, directly influences model accuracy and predictive reliability. This concept rests on Tobler’s First Law of Geography (“everything is related to everything else, but near things are more related than distant things”). Neglecting spatial dependence typically results in oversmoothed or biased interpolations that fail to represent local heterogeneity (Varouchakis et al., 2012; Karami et al., 2022).
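
Spatial autocorrelation, the property Tobler's law describes, is commonly quantified with Moran's I. The sketch below is a minimal Python version that uses inverse-distance weights. This weighting is an assumption, since many other schemes are in use. The function name morans_i is hypothetical. Values above the null expectation of −1/(n−1) indicate that nearby values are more alike than distant ones.

```python
import numpy as np


def morans_i(coords, values):
    """Global Moran's I with inverse-distance weights (assumed scheme).

    Weights are 1/d for distinct locations and 0 on the diagonal
    (coincident points also get zero weight).
    """
    coords = np.asarray(coords, dtype=float)
    z = np.asarray(values, dtype=float)
    z = z - z.mean()
    d = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    w = np.zeros_like(d)
    off_diag = d > 0
    w[off_diag] = 1.0 / d[off_diag]
    n = len(z)
    return (n / w.sum()) * (z @ w @ z) / (z @ z)


# Ten hypothetical sites along a transect.
xy = np.column_stack([np.arange(10.0), np.zeros(10)])
smooth = np.arange(10.0)                # neighbours have similar values
alternating = np.tile([1.0, -1.0], 5)   # neighbours have opposite values
print(morans_i(xy, smooth), morans_i(xy, alternating))
```

The smooth gradient gives a positive I, while the alternating pattern gives a negative I. An interpolator that ignores this structure would treat both fields the same way.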

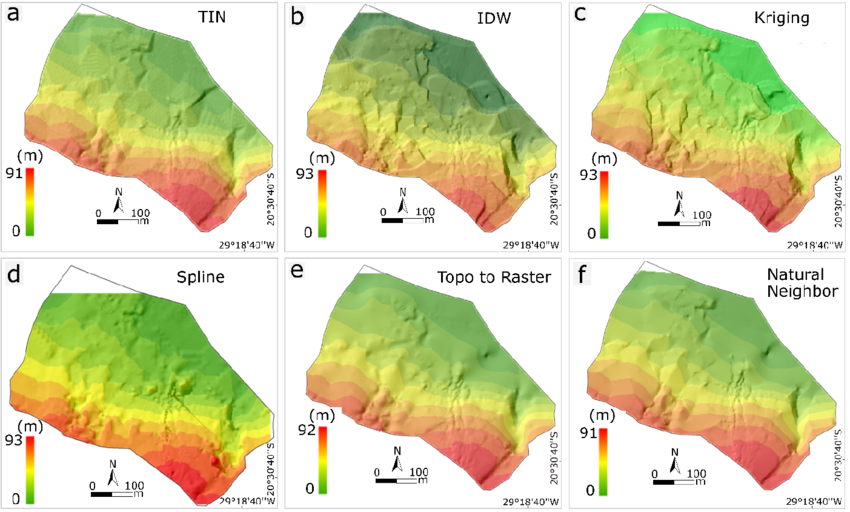

Spatial and spatiotemporal interpolation of natural and socio-economic variables is widely used across many scientific disciplines. Common interpolation methods (Fig. 2) include nearest neighbour (NN), inverse distance weighting (IDW), and trend surface mapping (TS). Geostatistical interpolation, known as kriging, was formalized by Matheron in the 1960s and came into wide use in the environmental sciences in the 1980s. It represented a significant advancement because it accounts for spatial correlation and provides an estimate of interpolation uncertainty via the kriging standard deviation. Kriging is the Best Linear Unbiased Predictor (BLUP) for spatial data under stationarity assumptions and a known covariance (variogram) model. Its flexibility is further enhanced by numerous variants that address specific cases, such as anisotropy, non-normality, and the inclusion of covariate information.
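
Each of the three deterministic methods named above takes only a few lines of Python. This is a minimal illustration that makes three assumptions: Euclidean distances in projected coordinates, an IDW power of 2, and a first-order (planar) trend surface. The function names are hypothetical.

```python
import numpy as np


def _distances(xy_obs, xy_new):
    """Euclidean distance matrix of shape (n_new, n_obs)."""
    return np.linalg.norm(xy_new[:, None, :] - xy_obs[None, :, :], axis=-1)


def nearest_neighbour(xy_obs, z_obs, xy_new):
    """NN: copy the value of the closest observation."""
    return z_obs[np.argmin(_distances(xy_obs, xy_new), axis=1)]


def idw(xy_obs, z_obs, xy_new, power=2.0):
    """IDW: weighted mean with weights 1 / distance**power (power assumed)."""
    out = np.empty(len(xy_new))
    for k, row in enumerate(_distances(xy_obs, xy_new)):
        hit = row == 0
        if hit.any():                 # target coincides with a sample
            out[k] = z_obs[hit][0]
        else:
            w = 1.0 / row**power
            out[k] = w @ z_obs / w.sum()
    return out


def trend_surface(xy_obs, z_obs, xy_new):
    """First-order TS: least-squares plane z = b0 + b1*x + b2*y."""
    def design(xy):
        return np.column_stack([np.ones(len(xy)), xy[:, 0], xy[:, 1]])
    beta, *_ = np.linalg.lstsq(design(xy_obs), z_obs, rcond=None)
    return design(xy_new) @ beta


# Illustrative data: four samples at the corners of a unit square.
xy_obs = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
z_obs = np.array([1.0, 2.0, 3.0, 4.0])
xy_new = np.array([[0.5, 0.5], [0.1, 0.0]])
print(nearest_neighbour(xy_obs, z_obs, xy_new),
      idw(xy_obs, z_obs, xy_new),
      trend_surface(xy_obs, z_obs, xy_new))
```

The three methods produce different kinds of surfaces:
- NN produces piecewise-constant surfaces.
- IDW produces weighted averages that always stay within the observed range.
- The trend surface captures only broad gradients.

None of the three provides an uncertainty estimate, which is the gap kriging fills.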

However, kriging also has limitations. It can be computationally intensive and relies on stationarity assumptions. Developing an appropriate geostatistical model for diverse data types can also be challenging, and incorporating large amounts of covariate information is often difficult. A key challenge arises when the data cannot easily be transformed to normality. Indicator kriging was developed to address this, but it is cumbersome and not fully model-based, meaning it does not rest on a complete statistical model of the data. The Generalized Linear Geostatistical Model is statistically rigorous but remains limited in the distributions it can handle and is technically complex. For instance, modeling variables with many zeros and extreme values, such as precipitation, is particularly challenging.
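
The following is a minimal ordinary kriging sketch in Python. It shows where the modelling assumptions and the computational cost enter. It assumes an exponential covariance whose parameters are already known and no nugget by default; in practice the parameters are fitted from an empirical variogram. The function names are hypothetical. The linear system grows as (n + 1) × (n + 1) with the number of observations n, which is the source of the computational burden mentioned above.

```python
import numpy as np


def exp_cov(d, sill=1.0, range_=1.0, nugget=0.0):
    """Exponential covariance (assumed model); the nugget adds only at d = 0."""
    d = np.asarray(d, dtype=float)
    return sill * np.exp(-d / range_) + nugget * (d == 0)


def ordinary_kriging(xy_obs, z_obs, xy_new, **cov_params):
    """Ordinary kriging predictions and kriging variances.

    For each target, solve [C 1; 1^T 0] [lambda; mu] = [c0; 1], where C holds
    covariances among observations and c0 covariances to the target.
    Kriging variance = C(0) - lambda^T c0 - mu.
    """
    n = len(z_obs)
    d_obs = np.linalg.norm(xy_obs[:, None, :] - xy_obs[None, :, :], axis=-1)
    a = np.ones((n + 1, n + 1))
    a[:n, :n] = exp_cov(d_obs, **cov_params)
    a[n, n] = 0.0                                  # unbiasedness constraint row
    c00 = exp_cov(0.0, **cov_params)
    preds, variances = [], []
    for target in xy_new:
        c0 = exp_cov(np.linalg.norm(xy_obs - target, axis=1), **cov_params)
        sol = np.linalg.solve(a, np.append(c0, 1.0))
        lam, mu = sol[:n], sol[n]
        preds.append(lam @ z_obs)
        variances.append(c00 - lam @ c0 - mu)
    return np.array(preds), np.array(variances)


# Illustrative data; covariance parameters are assumed, not fitted.
xy_obs = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
z_obs = np.array([1.0, 2.0, 3.0, 4.0])
targets = np.array([[0.5, 0.5], [0.0, 0.0]])
pred, var = ordinary_kriging(xy_obs, z_obs, targets, sill=1.0, range_=1.0)
```

Without a nugget, kriging reproduces the observation exactly at a sampled location, with zero variance. Between samples it returns a positive kriging variance, from which the kriging standard deviation follows.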

Annual or monthly precipitation totals may still include zeros and show strong positive skewness, particularly in arid regions. Even so, temporal aggregation tends to bring their distribution closer to normality, so kriging generally performs better on such data. In contrast, daily or hourly precipitation exhibits higher spatial variability, questionable stationarity, strongly skewed distributions, and many zeros, which makes kriging less suitable. Similar challenges arise when interpolating air quality indices or pollutant concentrations in surface water and groundwater.
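
The effect of temporal aggregation can be illustrated with synthetic data. The sketch below builds daily precipitation in three steps:
1. A wet/dry occurrence process decides which days are wet.
2. Wet days receive gamma-distributed amounts.
3. The daily values are summed into 30-day totals.

It then compares the share of zeros and the skewness of the daily and 30-day series. All parameters (wet-day probability 0.3, gamma shape 0.7, scale 8 mm) are arbitrary assumptions, not estimates for any real region.

```python
import numpy as np


def skewness(x):
    """Sample skewness m3 / m2**1.5 (population moments)."""
    x = np.asarray(x, dtype=float)
    dev = x - x.mean()
    return np.mean(dev**3) / np.mean(dev**2) ** 1.5


rng = np.random.default_rng(42)
n_months, days_per_month = 600, 30                        # synthetic 30-day "months"
wet = rng.random((n_months, days_per_month)) < 0.3        # assumed P(wet day) = 0.3
amount = rng.gamma(shape=0.7, scale=8.0, size=wet.shape)  # assumed wet-day mm
daily = np.where(wet, amount, 0.0)
monthly = daily.sum(axis=1)

print(f"daily:   zeros={np.mean(daily == 0):.2f}, skew={skewness(daily):.2f}")
print(f"monthly: zeros={np.mean(monthly == 0):.2f}, skew={skewness(monthly):.2f}")
```

Summing many daily values almost eliminates zeros and sharply reduces skewness. This is the behaviour that makes aggregated totals more amenable to kriging than daily data.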

3. Types of Spatial Data and Associated Challenges

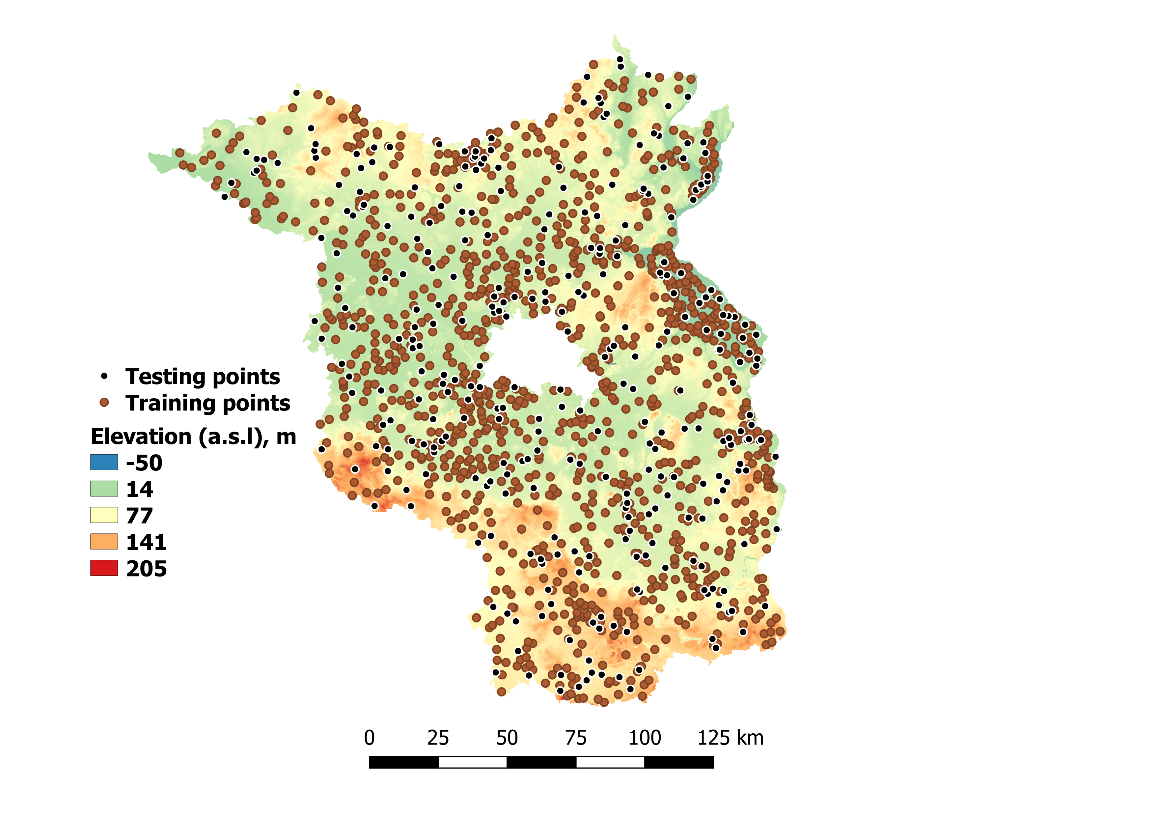

Spatial datasets are typically represented as vector or raster data. Point observations, technically a subset of vector data, form the usual input to interpolation. Each data type presents distinct analytical challenges. Vector data (points, lines, polygons) effectively represent discrete geographical features but are limited in depicting continuous spatial variation. Raster data, consisting of regular grid cells, are well suited to continuous phenomena but involve a trade-off between resolution and computational cost. Point data, such as measurements from weather stations or piezometers, provide direct observations (Fig. 3). However, they are often unevenly distributed, which leads to interpolation uncertainty and potential sampling bias (Cui et al., 2016).
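
Counting how many grid cells actually contain an observation illustrates both the resolution trade-off and the effect of uneven sampling. The sketch below uses 50 hypothetical, spatially clustered stations in a 100 × 100 km area. The extent, cell sizes, clustering, and function name are all assumptions for illustration.

```python
import numpy as np


def empty_cell_fraction(xy, extent, cell_size):
    """Fraction of square raster cells that contain no point observation.

    extent = (xmin, xmax, ymin, ymax); points on the outer edge are
    assigned to the last row/column.
    """
    xmin, xmax, ymin, ymax = extent
    n_cols = int(np.ceil((xmax - xmin) / cell_size))
    n_rows = int(np.ceil((ymax - ymin) / cell_size))
    col = np.clip(((xy[:, 0] - xmin) // cell_size).astype(int), 0, n_cols - 1)
    row = np.clip(((xy[:, 1] - ymin) // cell_size).astype(int), 0, n_rows - 1)
    counts = np.zeros((n_rows, n_cols), dtype=int)
    np.add.at(counts, (row, col), 1)          # points per cell
    return np.mean(counts == 0)


rng = np.random.default_rng(0)
# Hypothetical stations clustered around (20, 20) km (assumed pattern).
stations = np.clip(rng.normal(20.0, 5.0, size=(50, 2)), 0.0, 100.0)
extent = (0.0, 100.0, 0.0, 100.0)
for cell in (50.0, 10.0, 1.0):
    print(f"{cell:>5} km cells: {empty_cell_fraction(stations, extent, cell):.3f} empty")
```

As cells become finer, almost none of them holds a direct observation, so nearly all raster values must come from interpolation. Clustering makes this worse in the unsampled parts of the area.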

A persistent challenge lies in integrating multi-source spatial data collected at differing scales, resolutions, and temporal frequencies. For instance, harmonizing in-situ measurements with satellite-based observations (e.g., Sentinel-1/2, PlanetScope) requires rigorous preprocessing, coordinate transformation, and spatial alignment. Furthermore, sensor noise, data gaps, and differing temporal acquisition intervals complicate spatiotemporal interpolation and model validation.
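
The alignment step has a spatial part and a temporal part. The minimal sketch below first maps point coordinates to the row and column of a north-up raster. It then matches each field observation date to the nearest image acquisition within a tolerance. It assumes the points have already been transformed into the raster's coordinate reference system. The grid origin, cell size, dates, 3-day tolerance, and function names are hypothetical.

```python
import numpy as np


def point_to_cell(x, y, x0, y0, cell):
    """Row/col of the cell containing (x, y) in a north-up grid with
    top-left corner (x0, y0). Assumes x, y are already in the raster CRS."""
    col = np.floor((np.asarray(x, dtype=float) - x0) / cell).astype(int)
    row = np.floor((y0 - np.asarray(y, dtype=float)) / cell).astype(int)
    return row, col


def nearest_acquisition(obs_dates, scene_dates, max_gap_days):
    """Index of the closest scene date for each observation date, or -1
    when the closest scene is more than max_gap_days away."""
    obs = np.asarray(obs_dates, dtype="datetime64[D]")
    scenes = np.asarray(scene_dates, dtype="datetime64[D]")
    gap = np.abs(obs[:, None] - scenes[None, :]).astype(int)   # days
    idx = np.argmin(gap, axis=1)
    too_far = gap[np.arange(len(obs)), idx] > max_gap_days
    return np.where(too_far, -1, idx)


# Hypothetical 10 m grid with top-left corner at (500000, 4100000).
row, col = point_to_cell([500015.0, 500099.0], [4099995.0, 4099901.0],
                         x0=500000.0, y0=4100000.0, cell=10.0)
scene_dates = ["2021-06-01", "2021-06-06", "2021-06-11"]  # hypothetical scenes
obs_dates = ["2021-06-02", "2021-06-09", "2021-06-30"]    # hypothetical field visits
match = nearest_acquisition(obs_dates, scene_dates, max_gap_days=3)
```

Observations without a scene within the tolerance are flagged with -1 rather than silently paired with a distant image. Those flagged cases are where differing acquisition intervals begin to affect validation.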

4. Challenges in Spatiotemporal Data Modeling

The analysis of spatiotemporal datasets presents both methodological and computational challenges. A major issue is data sparsity, particularly in the temporal dimension, where long, continuous observation records are rare. Missing data and irregular sampling complicate space–time modeling and increase uncertainty. Computational intensity is another limitation: high-resolution datasets, especially those derived from remote sensing, demand significant processing power for model fitting and validation (Hengl et al., 2018).
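
Two routine steps for sparse or gappy records are checking completeness per station and filling only short gaps. Long gaps are left for a space–time model to handle. The sketch below does both, using linear interpolation in time for the short gaps. The max_gap threshold, the example groundwater-level series, and the function names are illustrative assumptions rather than a recommended procedure.

```python
import numpy as np


def completeness(values):
    """Fraction of non-missing observations per station (columns)."""
    return np.mean(~np.isnan(values), axis=0)


def fill_short_gaps(series, max_gap):
    """Linearly interpolate runs of NaN no longer than max_gap steps.
    Longer gaps and gaps touching either end of the series stay missing."""
    s = np.asarray(series, dtype=float).copy()
    isnan = np.isnan(s)
    idx = np.arange(len(s))
    i = 0
    while i < len(s):
        if not isnan[i]:
            i += 1
            continue
        start = i
        while i < len(s) and isnan[i]:
            i += 1
        # The gap covers indices start .. i-1; both neighbours must exist.
        if start > 0 and i < len(s) and (i - start) <= max_gap:
            s[start:i] = np.interp(idx[start:i], [start - 1, i], [s[start - 1], s[i]])
    return s


# Hypothetical groundwater-level series (m) with gaps of length 1, 3 and 1.
levels = np.array([10.0, np.nan, 12.0, np.nan, np.nan, np.nan, 16.0, np.nan])
filled = fill_short_gaps(levels, max_gap=1)
stations = np.column_stack([levels, fill_short_gaps(levels, max_gap=3)])
print(completeness(stations))
```

The threshold makes explicit how much temporal interpolation is accepted before a gap is treated as genuinely missing.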

Model selection also plays a crucial role. Choosing appropriate variogram models or covariance functions for space–time kriging requires expertise and often subjective judgment, particularly for non-stationary or anisotropic processes. Uncertainty quantification also remains an open challenge. Traditional geostatistical methods provide prediction error variances, such as the kriging variance, whereas many machine learning (ML)-based spatial interpolators lack standardized uncertainty measures (Zhu et al., 2022).
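
Variogram model selection becomes less subjective when several candidate models are fitted to the empirical semivariogram and their fits are compared. The Python sketch below uses the classical (Matheron) estimator and an unweighted least-squares grid search over the range. The sketch rests on several assumptions:
- The unweighted fit is a simplification; practical tools usually apply weighted least squares.
- The example is purely spatial rather than space–time.
- The function names are hypothetical.

```python
import numpy as np


def empirical_variogram(xy, z, bin_edges):
    """Classical (Matheron) estimator: in each distance bin,
    gamma(h) = mean of 0.5 * (z_i - z_j)**2 over point pairs in the bin."""
    i, j = np.triu_indices(len(z), k=1)          # each pair once
    d = np.linalg.norm(xy[i] - xy[j], axis=1)
    half_sq = 0.5 * (z[i] - z[j]) ** 2
    which = np.digitize(d, bin_edges) - 1        # bin index per pair
    lags, gammas = [], []
    for b in range(len(bin_edges) - 1):
        in_bin = which == b
        if in_bin.any():                         # skip empty bins
            lags.append(d[in_bin].mean())
            gammas.append(half_sq[in_bin].mean())
    return np.array(lags), np.array(gammas)


def spherical(h, sill, a):
    """Spherical model; reaches the sill at h = a."""
    r = np.minimum(np.asarray(h, dtype=float) / a, 1.0)
    return sill * (1.5 * r - 0.5 * r**3)


def exponential(h, sill, a):
    """Exponential model with range parameter a (approaches the sill)."""
    return sill * (1.0 - np.exp(-np.asarray(h, dtype=float) / a))


def fit_model(model, lags, gammas, candidate_ranges):
    """Unweighted least squares by grid search over the range (assumption);
    for a fixed range the optimal sill has a closed form.
    Returns (sse, sill, range)."""
    best = None
    for a in candidate_ranges:
        f = model(lags, 1.0, a)
        sill = (f @ gammas) / (f @ f)
        sse = float(np.sum((gammas - sill * f) ** 2))
        if best is None or sse < best[0]:
            best = (sse, sill, a)
    return best


# Toy check: a semivariogram that follows an exponential model exactly.
lags = np.linspace(0.5, 10.0, 20)
gammas = exponential(lags, sill=2.0, a=3.0)
candidates = np.linspace(0.5, 10.0, 96)          # step 0.1
for name, model in [("spherical", spherical), ("exponential", exponential)]:
    sse, sill, a = fit_model(model, lags, gammas, candidates)
    print(f"{name}: sse={sse:.4f}, sill={sill:.2f}, range={a:.2f}")
```

With real data, neither candidate fits perfectly. The misfit comparison, or cross-validation of the resulting kriging predictions, then replaces a purely visual choice.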

Recent developments have focused on hybrid spatiotemporal models that combine statistical and machine learning methods. For example, Random Forest can be integrated with spatial information in two ways: through distance-based covariates, such as buffer distances to observation locations, or through kriging of the model residuals (RF+Kriging). Both enhance predictive performance while maintaining interpretability (Hengl et al., 2018). Similarly, the Geographically Weighted Random Forest (RFgw) approach of Georganos et al. (2021) introduces local calibration by fitting local models to neighbouring observations, allowing the model to adapt to regional heterogeneity. These advances indicate a broader paradigm shift toward models that merge spatial dependence with data-driven learning capacity.
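
The residual-kriging idea behind RF+Kriging follows the general regression-kriging pattern: model the trend from covariates, krige the residuals, and add both parts. The sketch below makes three simplifying choices:
- A linear least-squares trend is swapped in for the Random Forest to keep the example dependency-free.
- The residuals are simple-kriged with an assumed exponential covariance of known parameters.
- The function name regression_kriging is hypothetical.

```python
import numpy as np


def exp_cov(d, sill, range_):
    """Exponential covariance (assumed model, parameters assumed known)."""
    return sill * np.exp(-np.asarray(d, dtype=float) / range_)


def regression_kriging(xy, covar, z, xy_new, covar_new, sill=1.0, range_=1.0):
    """Hybrid sketch: (1) fit a trend on covariates, (2) simple-krige the
    residuals (assumed zero-mean), (3) add the two parts.

    A linear trend stands in for the Random Forest used in RF+Kriging;
    the residual step is the same whatever learner produces the trend.
    """
    X = np.column_stack([np.ones(len(z)), covar])
    beta, *_ = np.linalg.lstsq(X, z, rcond=None)
    resid = z - X @ beta

    d = np.linalg.norm(xy[:, None, :] - xy[None, :, :], axis=-1)
    C = exp_cov(d, sill, range_)
    d0 = np.linalg.norm(xy_new[:, None, :] - xy[None, :, :], axis=-1)
    c0 = exp_cov(d0, sill, range_)                 # (n_new, n_obs)
    weights = np.linalg.solve(C, c0.T).T           # simple kriging weights
    X_new = np.column_stack([np.ones(len(xy_new)), covar_new])
    return X_new @ beta + weights @ resid


# Illustrative data with a hypothetical covariate (e.g. an elevation index).
xy = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
covar = np.array([0.0, 1.0, 2.0, 3.0])
z = np.array([1.0, 2.5, 4.5, 7.0])
pred = regression_kriging(xy, covar, z, np.array([[0.5, 0.5]]), np.array([1.5]))
```

The design has two useful properties:
- Any other learner, for example a Random Forest, can replace the linear trend without changing the residual step.
- Far from the observations, the kriged residual decays towards zero and the prediction falls back to the trend.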

5. Synthesis and Emerging Trends

The literature increasingly emphasizes the need for integrated frameworks that combine the strengths of different modeling approaches. Physically based models offer process understanding, geostatistical methods capture spatial structure, and machine learning offers flexibility and scalability. Hybrid frameworks that combine these paradigms are increasingly reported to be among the most effective strategies for reliable spatial interpolation and prediction in the environmental sciences (Sekulić et al., 2020; Hengl et al., 2018).

Moreover, open-source platforms such as R and Python, together with cloud-based processing systems like Google Earth Engine, have revolutionized how large-scale spatiotemporal data are managed and analyzed. The increasing interoperability among tools (spacetime, stars, terra, gstat) allows researchers to create reproducible workflows that bridge the gap between field measurements, remote sensing, and predictive modeling.

In conclusion, spatiotemporal data are indispensable for modern environmental analysis, providing the foundation for dynamic and scalable spatial interpolation. The integration of spatial information, temporal dependencies, and advanced computational frameworks continues to drive progress in environmental modeling, promoting more accurate, data-informed decision-making in natural resource management.