1.2. Challenges in Complete and Accurate Groundwater Assessments

- Explain the major challenges in achieving complete and accurate groundwater assessments.

- Differentiate between physically based, conceptual, geostatistical, and machine learning models used in groundwater modeling.

- Identify the sources of uncertainty in hydrological models and understand the concept of equifinality.

- Discuss the “scale problem” and its implications for model design and performance.

- Evaluate the limitations of non-spatial machine learning models and describe how spatially explicit methods can improve predictions.

Groundwater assessments are fundamental for sustainable water management, yet achieving complete and accurate evaluations remains a major scientific and operational challenge. These challenges arise from data limitations, model structure uncertainty, scale mismatches, and methodological shortcomings in both physically based and data-driven modeling approaches. The following discussion highlights four critical problem areas: the constraints of physically based models, the structural uncertainties inherent to hydrological model design, the limitations of linear and geostatistical methods, and the non-spatial nature of many machine learning models.

1. Limitations of Physically Based Models

Physically based groundwater models, such as MODFLOW or other distributed hydrological frameworks, aim to simulate the physical processes that govern groundwater flow and recharge. While such models are theoretically robust, their practical accuracy depends heavily on the availability and reliability of spatial and temporal data describing aquifer geometry, hydraulic properties, recharge rates, and boundary conditions (Singh, 2014; Ahmed et al., 2020). In most real-world applications, these parameters are poorly constrained or only partially measured, particularly in large and heterogeneous basins. Consequently, modelers must rely on simplifying assumptions, which reduce physical realism and lead to biased estimates of recharge or discharge.

Moreover, physically based models require intensive calibration. Because multiple parameter sets can produce similar outputs, a phenomenon known as equifinality, the models often lack unique solutions and transfer poorly across different hydrological contexts (Beven, 2001; Aliyari et al., 2019). This dependence on calibration and the limited ability to generalize undermine confidence in their predictive power. Even when structural processes are well represented, the lack of high-quality spatial data restricts model reliability, especially when scaling from local to basin or transboundary levels. Furthermore, model complexity increases computational cost and may amplify parameter uncertainty, particularly when new processes are added without sufficient data support (Passioura, 1996; Deng & Bailey, 2020).
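Equifinality can be seen in even the simplest analytical setting. The sketch below is an illustration under stated assumptions, not a calibrated model: it uses the textbook steady-state solution for one-dimensional flow with uniform recharge between two fixed-head boundaries, and all parameter values and the function name `steady_heads` are hypothetical. Because the heads depend only on the ratio of recharge to transmissivity, doubling both produces exactly the same head profile.

```python
def steady_heads(recharge, transmissivity, xs, length=1000.0,
                 h_left=50.0, h_right=50.0):
    """Heads (m) for 1D steady flow with uniform recharge between fixed heads.

    Solves T * h'' = -R with h(0) = h_left and h(length) = h_right.
    recharge in m/day, transmissivity in m^2/day, distances in m.
    """
    ratio = recharge / (2.0 * transmissivity)
    return [
        h_left + (h_right - h_left) * x / length + ratio * x * (length - x)
        for x in xs
    ]


# Hypothetical observation locations along the transect (m).
xs = [0.0, 250.0, 500.0, 750.0, 1000.0]

# Two different parameter sets with the same recharge/transmissivity ratio.
heads_a = steady_heads(recharge=0.0005, transmissivity=100.0, xs=xs)
heads_b = steady_heads(recharge=0.0010, transmissivity=200.0, xs=xs)

# Largest head difference between the two parameter sets.
max_diff = max(abs(a - b) for a, b in zip(heads_a, heads_b))
print(heads_a)
print(max_diff)
```

Head observations alone cannot separate the two parameter sets here. A different kind of observation, such as the discharge leaving the aquifer at the boundaries (which equals recharge times length at steady state and therefore differs by a factor of two), would be needed to tell them apart.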

2. Structural Uncertainty and Scale Problems in Hydrological Modeling

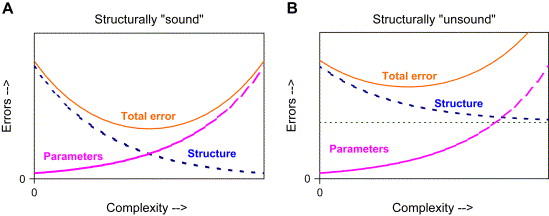

All hydrological models, whether lumped, distributed, deterministic, or stochastic, face structural and conceptual limitations (Fig. 1). Lumped models simplify catchments into a single storage unit or a few such units, ignoring spatial heterogeneity, while distributed models attempt to resolve this heterogeneity but require large datasets that are seldom available (Beven, 2001). Conceptual and empirical models depend heavily on calibration data, whereas physically based models, despite their process orientation, still require calibration due to unmeasurable parameters and unresolved internal heterogeneity (Grayson et al., 1992; Ye et al., 1997).

Increasing model complexity often reduces structural uncertainty but simultaneously increases parameter uncertainty. If the model’s structural foundation is flawed, additional complexity only compounds error without improving accuracy. This is reflected in the problem of non-uniqueness, where multiple model configurations can fit observed data equally well but for different physical reasons (Oreskes et al., 1994). The “scale problem” also persists: hydrological processes occur across multiple scales, yet models discretize them into limited spatial and temporal resolutions, leading to aggregation errors and mismatches between modeled and real-world processes. Consequently, even the most advanced models, however computationally powerful, cannot eliminate the need for reliable observations and uncertainty quantification.
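A small numerical example may help make the aggregation error concrete. The sketch below assumes flow passing in series through four equal-length segments of one coarse model cell, one of which is a low-conductivity lens; the conductivity values and gradient are hypothetical. For flow in series the effective conductivity is the harmonic mean of the segments, so upscaling with a simple arithmetic average overstates the flux.

```python
from statistics import fmean, harmonic_mean

# Hypothetical fine-scale hydraulic conductivities (m/day) for four
# equal-length segments crossed in series; the third is a low-K lens.
fine_k = [10.0, 10.0, 0.1, 10.0]
gradient = 0.01  # assumed hydraulic gradient (dimensionless)

# Naive upscaling: arithmetic average of the fine-scale values.
k_arithmetic = fmean(fine_k)

# Effective conductivity for flow in series: harmonic mean.
k_harmonic = harmonic_mean(fine_k)

# Darcy flux per unit area (m/day) under each upscaling choice.
flux_naive = k_arithmetic * gradient
flux_effective = k_harmonic * gradient

print(k_arithmetic, k_harmonic)
print(flux_naive / flux_effective)
```

In this configuration the arithmetic average overestimates the flux by more than an order of magnitude, purely because of how the sub-grid heterogeneity was aggregated. For flow parallel to the layering the arithmetic mean would be the appropriate choice, which is why the right upscaling rule depends on the process and geometry rather than on the grid alone.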

3. Limitations of Linear and Geostatistical Methods

Linear and geostatistical methods have long been applied to interpolate groundwater levels and hydraulic parameters. Classical kriging and cokriging approaches assume spatial stationarity and Gaussian-distributed data, assumptions that rarely hold in heterogeneous aquifer systems influenced by pumping, climate variability, and land-use change (Varouchakis et al., 2012; Karami et al., 2022). This can result in overly smoothed predictions that underrepresent local anomalies and extremes. Additionally, reliable variogram estimation, which is central to kriging, becomes difficult when data are sparse or unevenly distributed (Cui et al., 2016). Stochastic simulations such as Sequential Gaussian Simulation (SGS) can better preserve local variability, but they increase computational costs and depend on restrictive statistical assumptions (Mariethoz & Caers, 2014). As spatial and temporal resolutions of monitoring networks improve, these methods become even more data- and computation-intensive, limiting their applicability at regional scales (Hengl et al., 2018).
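The difficulty of variogram estimation with sparse data can be illustrated with a minimal empirical variogram. The sketch below assumes the classical estimator (half the mean squared difference between all pairs of observations falling in a distance bin); the function name `empirical_variogram`, the well coordinates, and the groundwater levels are hypothetical. It returns the number of pairs behind each estimate so that thinly supported bins are visible.

```python
from itertools import combinations
from math import hypot


def empirical_variogram(points, values, lag_width, n_lags):
    """Return (lag_center, semivariance, pair_count) for each distance bin.

    Semivariance is half the mean squared difference of all pairs whose
    separation distance falls in the bin; it is None for empty bins.
    """
    sums = [0.0] * n_lags
    counts = [0] * n_lags
    for i, j in combinations(range(len(points)), 2):
        dist = hypot(points[i][0] - points[j][0], points[i][1] - points[j][1])
        bin_index = int(dist // lag_width)
        if bin_index < n_lags:
            sums[bin_index] += 0.5 * (values[i] - values[j]) ** 2
            counts[bin_index] += 1
    result = []
    for k in range(n_lags):
        gamma = sums[k] / counts[k] if counts[k] else None
        result.append(((k + 0.5) * lag_width, gamma, counts[k]))
    return result


# Hypothetical wells (km coordinates) and groundwater levels (m).
points = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
levels = [10.0, 11.0, 13.0, 16.0]

variogram = empirical_variogram(points, levels, lag_width=1.5, n_lags=2)
for lag_center, gamma, n_pairs in variogram:
    print(lag_center, gamma, n_pairs)
```

With four wells, each bin rests on only two or three pairs, so a single unusual well can change the fitted variogram model substantially. Inspecting pair counts per lag before fitting is a simple check on whether the kriging results deserve much confidence.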

4. Non-Spatial Nature and Limitations of Machine Learning Models

Recent advances in machine learning (ML) have introduced new opportunities for groundwater modeling. Algorithms such as Random Forests (RF), Gradient Boosting Machines (GBM), and Artificial Neural Networks (ANNs) have demonstrated strong predictive capabilities by capturing complex, nonlinear relationships between inputs and groundwater responses (Zanotti et al., 2019; Rahman et al., 2020). However, traditional ML models often neglect the spatial dependency inherent in hydrological systems, treating all samples as independent observations (Hengl et al., 2018; Brenning, 2023). This independence assumption conflicts with the spatial autocorrelation present in groundwater data and can produce biased estimates, especially when data points are spatially clustered.
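Whether the independence assumption is reasonable for a given dataset can be checked before any model is trained. One common diagnostic is global Moran's I, sketched below under simplifying assumptions: the wells lie along a transect and each is linked only to its immediate neighbours with binary weights. The helper names `morans_i` and `chain_weights` and the two synthetic value series are hypothetical.

```python
def morans_i(values, weights):
    """Global Moran's I for a list of values and an n x n weight matrix."""
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    total_weight = sum(sum(row) for row in weights)
    # Weighted cross-products of deviations between linked sites.
    cross = sum(
        weights[i][j] * dev[i] * dev[j] for i in range(n) for j in range(n)
    )
    variance_sum = sum(d * d for d in dev)
    return (n / total_weight) * (cross / variance_sum)


def chain_weights(n):
    """Binary weights linking each site to its immediate transect neighbours."""
    return [
        [1.0 if abs(i - j) == 1 else 0.0 for j in range(n)] for i in range(n)
    ]


weights = chain_weights(10)

# Synthetic series: a smooth trend and a strictly alternating pattern.
trend = [float(i) for i in range(10)]
alternating = [1.0 if i % 2 == 0 else -1.0 for i in range(10)]

i_trend = morans_i(trend, weights)
i_alt = morans_i(alternating, weights)
print(i_trend, i_alt)
```

Values near zero are consistent with spatially independent samples. A clearly positive value, as for the smooth trend, indicates that neighbouring observations carry partly redundant information, which is the situation in which treating samples as independent is most misleading.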

Furthermore, ML models tend to perform poorly when extrapolating beyond their training domain, making them less suitable for unmonitored or data-scarce regions (Takoutsing & Heuvelink, 2022). Temporal data scarcity, such as the biannual piezometer measurements common in many developing regions, also hinders the ability of ML models to learn long-term aquifer dynamics (MacAllister et al., 2022). To overcome these issues, spatially explicit ML frameworks, including Geographically Weighted Random Forests (GW-RF) and hybrid spatial ML models, have been developed to integrate spatial context into the learning process (Georganos et al., 2021). These approaches enhance prediction accuracy and uncertainty quantification by explicitly modeling neighbourhood dependencies and spatial autocorrelation.
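One generic way to give an otherwise non-spatial learner some spatial context is to add neighbourhood information as input features. The sketch below is only an illustration of that idea and is not the GW-RF algorithm or any specific published method: for a target location it collects the observed level of, and distance to, the k nearest wells. The function name `neighbour_features` and the well data are hypothetical.

```python
from math import hypot


def neighbour_features(target, wells, k=2):
    """Return [level_1, dist_1, ..., level_k, dist_k] for the k nearest wells.

    target is an (x, y) location; wells is a list of (x, y, level) tuples.
    When building training rows for an observed well, that well itself
    should be left out of `wells` to avoid leaking the target value.
    """
    ranked = sorted(
        (hypot(target[0] - x, target[1] - y), level) for x, y, level in wells
    )
    features = []
    for dist, level in ranked[:k]:
        features.extend([level, dist])
    return features


# Hypothetical observed wells: (x km, y km, groundwater level m).
wells = [(0.0, 0.0, 12.0), (3.0, 4.0, 15.0), (6.0, 8.0, 20.0)]

# Spatial-context features for an unmonitored location.
features = neighbour_features((3.0, 0.0), wells, k=2)
print(features)
```

These features would be appended to the usual covariates (for example rainfall or land cover) before training. A large nearest-neighbour distance also serves as a rough warning that a prediction lies far from any observation, which is where extrapolation errors are most likely.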

Overall, each modeling paradigm (physically based, geostatistical, or machine learning) provides valuable insights but also carries inherent limitations. Physically based models offer mechanistic understanding but suffer from data dependency and computational burden. Geostatistical models are efficient and interpretable but rely on restrictive statistical assumptions that limit their realism. Machine learning approaches deliver flexibility and predictive power but often ignore spatial structure, leading to biased and non-generalizable outcomes. The emerging consensus in hydrogeological research points toward hybrid, spatially informed modeling frameworks that combine physical understanding, geostatistical rigor, and machine learning adaptability (Hengl et al., 2018; Georganos et al., 2021; Sekulić et al., 2020). Integrating these paradigms, supported by high-quality spatial and temporal data, represents the most promising path toward complete and accurate groundwater assessments.

Discussion Questions

- Why do physically based models, despite their strong theoretical foundation, often fail to provide accurate groundwater assessments in data-scarce regions?

- How does model complexity influence structural and parameter uncertainty?

- In what ways do geostatistical methods like kriging oversimplify real groundwater processes?

- What are the key drawbacks of using non-spatial machine learning models in hydrology, and how can these be addressed?

- How could hybrid or spatially informed machine learning approaches help bridge the gap between process understanding and data-driven prediction?

- Considering model uncertainty and scale issues, what balance should be struck between model sophistication and data availability?