4.2. Image Mapping, Train Test Dataset Creation

Image Mapping

The initial step is image mapping: identifying Sentinel-1 (SAR) and Sentinel-2 (optical) scenes that were captured on the same day and cover the same geographic area. This co-registration is crucial for two reasons:

- It ensures the availability of complete, complementary data pairs for training the model.

- It allows for the validation of the predicted synthetic NDVI (Normalized Difference Vegetation Index) against the actual NDVI derived directly from the co-registered Sentinel-2 data.

The mapping process generates a list of overlapping image pairs, which is saved as a CSV file. To perform this step, run the Jupyter notebook 0_4_S1_S2_mapping.ipynb in the folder ./1_Preparation/.
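The matching logic can be illustrated with a minimal sketch. This is not the code of the notebook: the `Scene` record, the bounding-box overlap test (a simplification of a real footprint intersection), the function names, and the CSV column names are all assumptions made for illustration.

```python
import csv
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Scene:
    """Hypothetical scene record; field names are illustrative only."""
    scene_id: str
    acquired: date
    # Bounding box (min_lon, min_lat, max_lon, max_lat), used here as a
    # stand-in for the true scene footprint.
    bbox: tuple


def boxes_overlap(a, b):
    """Return True if two bounding boxes intersect with nonzero area."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]


def map_scenes(s1_scenes, s2_scenes):
    """Pair each S1 scene with every S2 scene of the same date and area."""
    pairs = []
    for s1 in s1_scenes:
        for s2 in s2_scenes:
            same_day = s1.acquired == s2.acquired
            if same_day and boxes_overlap(s1.bbox, s2.bbox):
                pairs.append((s1.scene_id, s2.scene_id, s1.acquired.isoformat()))
    return pairs


def save_pairs(pairs, path):
    """Write the list of pairs to a CSV file (column names are assumed)."""
    with open(path, "w", newline="") as fh:
        writer = csv.writer(fh)
        writer.writerow(["s1_id", "s2_id", "date"])
        writer.writerows(pairs)


# Made-up example scenes.
s1 = [
    Scene("S1_A", date(2021, 5, 3), (10.0, 50.0, 12.0, 52.0)),
    Scene("S1_B", date(2021, 5, 9), (10.0, 50.0, 12.0, 52.0)),
]
s2 = [
    Scene("S2_A", date(2021, 5, 3), (11.0, 51.0, 13.0, 53.0)),  # same day, overlaps
    Scene("S2_B", date(2021, 5, 3), (20.0, 60.0, 21.0, 61.0)),  # same day, elsewhere
    Scene("S2_C", date(2021, 5, 10), (10.0, 50.0, 12.0, 52.0)),  # overlaps, other day
]
pairs = map_scenes(s1, s2)
print(pairs)  # [('S1_A', 'S2_A', '2021-05-03')]
```

Calling `save_pairs(pairs, "pairs.csv")` would write the list to disk; the file name is hypothetical. Real footprints are polygons, so a production version would likely use a geometry library for the intersection test.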

Train, Test, and Validation Split

Following mapping, the list of co-registered images must be split into training, testing, and validation datasets. To ensure the trained model is robust and generalizes well, the dataset must be balanced. For agricultural phenology applications, balance requires considering:

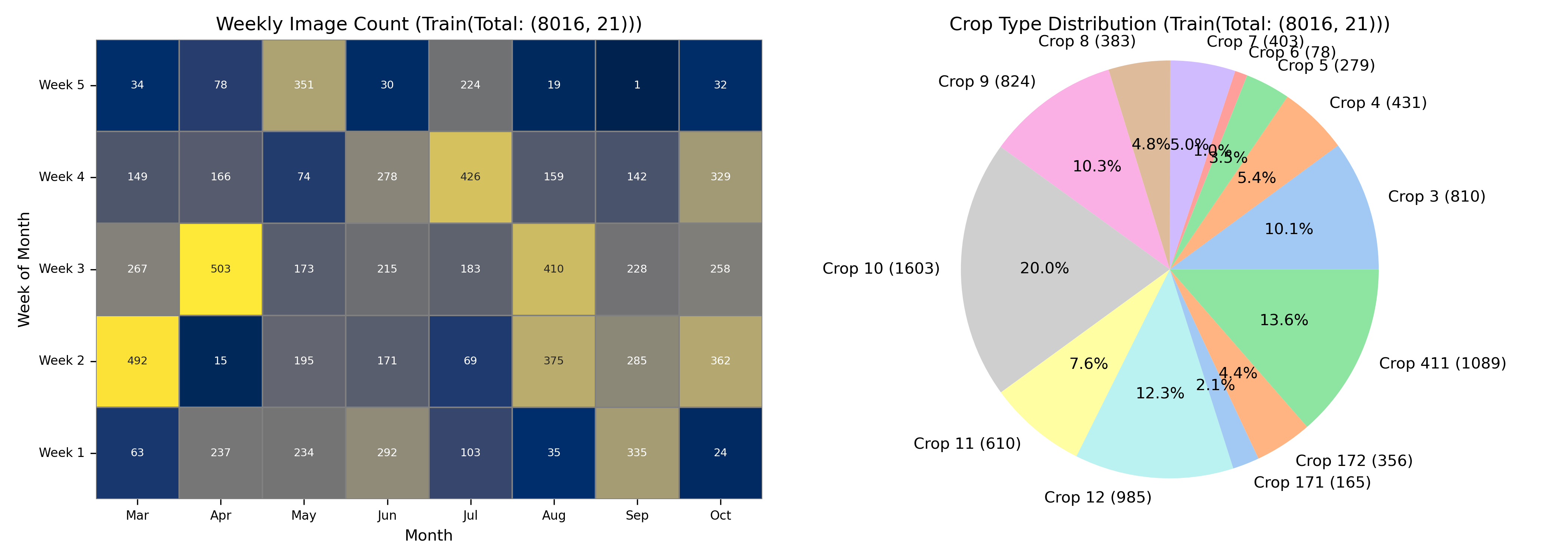

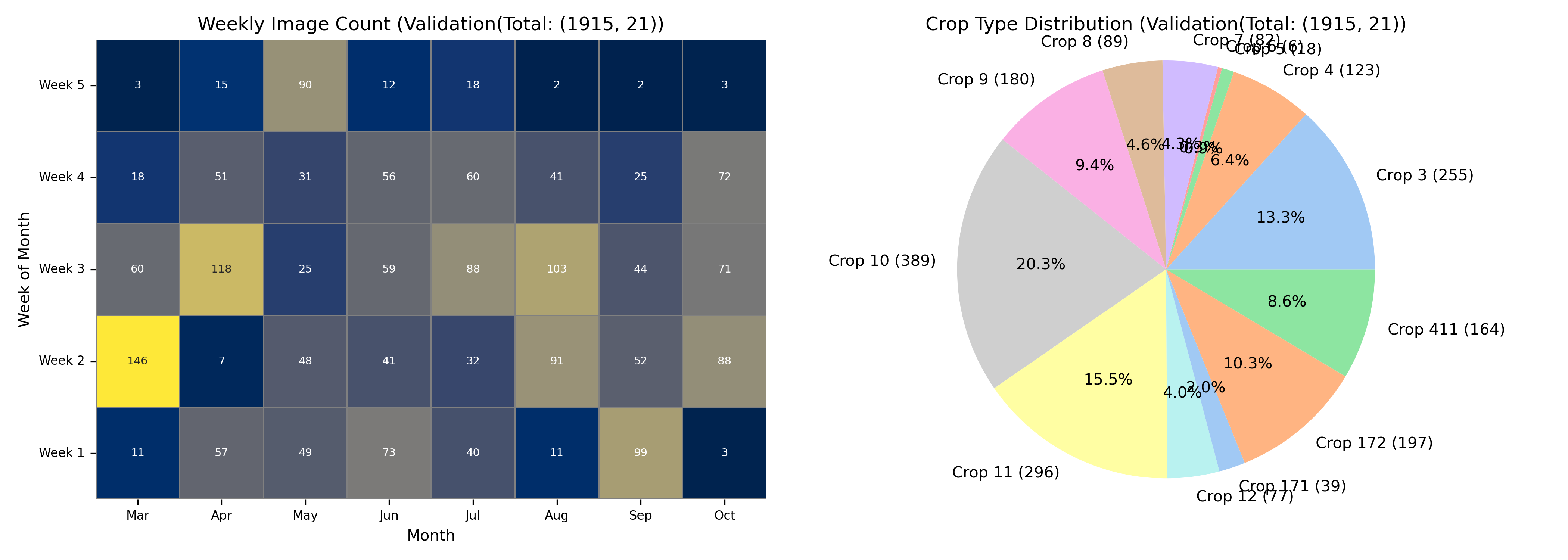

Temporal Balance: Ensuring that images from all months of the growing season are adequately represented in the training data to capture the full phenological cycle.

Crop Type Balance: Distributing all relevant crop types more or less equally across the dataset splits.

Field Balance: Including a maximum number of unique fields in the training set and ensuring these fields are not repeated in the validation set. This avoids introducing bias, where the model might learn specific field characteristics rather than general crop features.
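The field and crop type constraints can be sketched as a grouped, stratified split. The sample layout (dicts with `field_id`, `crop`, and `month` keys), the function name, and the split fractions are assumptions for illustration, not the notebook's implementation. The sketch also assumes that each field carries a single crop type.

```python
import random
from collections import defaultdict


def split_by_field(samples, val_fraction=0.2, test_fraction=0.2, seed=0):
    """Assign whole fields to train/val/test, separately for each crop type.

    Splitting on field IDs (not on individual images) keeps every field in
    exactly one split. Splitting per crop keeps crop shares similar across
    the splits.
    """
    fields_by_crop = defaultdict(set)
    for sample in samples:
        fields_by_crop[sample["crop"]].add(sample["field_id"])

    rng = random.Random(seed)  # fixed seed for a reproducible split
    field_to_split = {}
    for crop in sorted(fields_by_crop):
        fields = sorted(fields_by_crop[crop])
        rng.shuffle(fields)
        n_val = round(len(fields) * val_fraction)
        n_test = round(len(fields) * test_fraction)
        for i, field_id in enumerate(fields):
            if i < n_val:
                field_to_split[field_id] = "val"
            elif i < n_val + n_test:
                field_to_split[field_id] = "test"
            else:
                field_to_split[field_id] = "train"

    splits = {"train": [], "val": [], "test": []}
    for sample in samples:
        splits[field_to_split[sample["field_id"]]].append(sample)
    return splits


# Made-up data: 2 crops, 10 fields each, one image per month from April to September.
samples = [
    {"field_id": f"{crop}_{i}", "crop": crop, "month": month}
    for crop in ("wheat", "maize")
    for i in range(10)
    for month in range(4, 10)
]
splits = split_by_field(samples)
print({name: len(items) for name, items in splits.items()})
# {'train': 72, 'val': 24, 'test': 24}
```

Because every image of a field follows that field into its split, temporal balance is not enforced here. It holds only when each field is observed across the whole season, so it should be checked separately.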

These balancing and splitting operations generate the final training and validation datasets. To perform them, run the Jupyter notebook 0_5_TrainTestSplit.ipynb in the folder ./1_Preparation/. The distributions of the balanced datasets are presented as pie charts and a calendar plot.
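The shares behind such charts can be computed with a few lines. The following is a minimal sketch, assuming each sample is a dict with hypothetical `crop` and `month` keys; it produces only the numbers, not the plots.

```python
from collections import Counter


def share_by(samples, key):
    """Return the fraction of samples per value of `key`, sorted by value."""
    counts = Counter(sample[key] for sample in samples)
    total = sum(counts.values())
    return {value: counts[value] / total for value in sorted(counts)}


# Made-up training samples.
train = [
    {"crop": "wheat", "month": 4},
    {"crop": "wheat", "month": 5},
    {"crop": "maize", "month": 5},
    {"crop": "maize", "month": 6},
]
print(share_by(train, "crop"))   # {'maize': 0.5, 'wheat': 0.5}
print(share_by(train, "month"))  # {4: 0.25, 5: 0.5, 6: 0.25}
```

Comparing these shares between the splits gives a quick check that crop types and months are represented similarly in each.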